Set Sail For Fail? On AI risk

Table of Contents

- Summary

- Introduction

- AGI: a working definition

- The AGI risk scenarios

- Intelligence

- How easy is it to take over the world?

- Some AGI risk vignettes

- Conclusion

- Appendix A: Responses to critiques of AI risk

- Appendix B: Are current systems on a risky path? A reply to Cotra

- Appendix C: AI could defeat all of us combined? Maybe!

- Appendix D: Is there a fire alarm?

- Appendix E: Nanotechnology and recursive self-improvement

- A reading list

- Acknowledgements

- Changelog

Summary

- Existential risk due to artificial intelligence (hereafter AI risk) is worth taking seriously

- A common reason why it is not taken seriously is that arguments or scenarios that illustrate the risks from AI contain many "sci-fi" elements that many consider highly implausible, like developing advanced nanotechnology overnight.

- All critiques that completely reject, or seem to reject, AI risk are flawed.

- There is value in writing compelling concrete cases for AI risk

- Near the end of this essay I include some vignettes featuring AIs that lack some advanced capabilities but are dangerous regardless.

- Appendices B and C discuss two recent good attempts at this

- Those working in AI safety should write more of these for audiences outside of their communities

- It is worth investing in creating a world that can contain potentially adversarial AGIs, in addition to AI alignment

- The odds that AI will win in a confrontation with humanity are not fixed

- Resilience might be more tractable than alignment

- Alignment without resilience can lead to undesirable power imbalances

- Current models/paradigms (Like GPT-3, DALL-E, or GATO) are most likely safe to scale

Introduction

The standard argument for why developing advanced AI systems (hereafter AGI) is dangerous can be summarized as follows:

- Possibility: It is possible to develop such systems, and they will be developed at some point

- Misaligned system: Either because these systems will be tasked with goals that directly and obviously conflict with the continued survival of conscious life on Earth, or because they are given goals that inadvertently lead to the same outcomes, these systems will compete with us for resources

- AGI dominance: Such a system will eventually win, and as a result humanity will go extinct

There is a small research community in what's called AI alignment or AI safety that has over the past decade or two largely focused on points (1-2). This community has written a lot about what AGI is, when we will get it, what it might look like, or the sorts of governance issues it poses, why it may be difficult to have them do as they are told (some of this work is linked to at the end of this essay). Little in comparison has been written about the third point, what specific scenarios lead to catastrophe. I expected to find more articles on (3) than I did (See reading list at the end). Authors often assert (3) without much detail, often pointing to these three capabilities as explanations for why an AGI would take over (I discuss the first two in Appendix E):

- Advanced nanotechnology, often self-replicating

- Recursive self-improvement (e.g. an intelligence explosion)

- Superhuman manipulation skills (e.g. it can convince anyone of anything)

There are exceptions to this, like the example I discuss in Appendix C.

I found that trying to reason about AGI risk scenarios that rely on these is hard because I keep thinking that these possibly run into physical limitations that deserve more thought before thinking they are plausible enough to substantially affect my thinking. It occurred to me it would be fruitful to reason about AGI risk taking these options off the table to focus on other reasons one might suspect AGIs would have overwhelming power:

- Speed (The system has fast reaction times)

- Memory (The system could start with knowledge of all public data at the time of its creation, and any data subsequently acquired would be remembered perfectly)

- Superior strategic planning (There are courses of actions that might be too complex for humans to plan in a reasonable amount of time, let alone execute)

Turns out, there's plenty of risk just with these! The case for caring about AI risk (existential and otherwise) can and should be made without these sci-fi elements to reach a broader audience. One could then make a case for more risk by allowing for more sci-fi. In this post I will aim to explain how these three capabilities (speed, memory, and superior strategic planning) alone are sufficient to design plausible scenarios where an AGI system poses various degrees of risk. I don't take "sci-fi" to mean impossible: Many commonplace inventions today were once sci-fi. I take sci-fi to mean inventions that lie in the future and for which it is not yet clear how exactly they will pan out, if at all.

In particular I will assume the following:

- AGI will happen

- Artificial General Intelligence (AGI) will be developed at some point.

- This system will look familiar to us: It will be to some extent a descendant of large language models with some reinforcement learning added on top. It will run on hardware not unlike GPUs and TPUs.

- To sidestep certain issues that are hard to resolve, we can take the system to lack consciousness, qualia, or whatever term you prefer for that

- I address the question of whether general artificial intelligence is even a meaningful concept later

- AI safety research has failed

- This is not to say that it will fail. The scenario I want to explore here is what happens if it does and a system is developed without any consideration about safe deployment. This way we can explore AGI dominance in isolation rather than reason about how alignment might work

- Most work in AI safety assumes that the system is given a benign or neutral goal (usually making paperclips) which then leads to discussions of how that goal leads to catastrophe. Here the aim is to study the capabilities of AGIs in the context of competing with humans for resources, so we assume an adversarial goal for simplicity. In particular, the system in this essay is assumed to be given the goal of making Earth inhospitable to life (Perhaps a system created by negative utilitarians).

- One could also assume a Skynet scenario: that works as well. Some AI risk researchers seem to dislike this scenario, but it is actually a great example.

- Technological pessimists are right

- Advanced nanotechnology turns out not to be possible, beyond what current biology is capable of

- Broadly, there are no new scientific discoveries to be made that can radically empower an AGI. The weapons systems an AGI might design if asked to would look familiar to modern day engineers in the same way that yet-to-be-invented affordable hypersonic airplanes will look familiar to us.

- The system can't manipulate humans beyond what the most skilled human con-artist can do

- There is no recursive self-improvement. The system can improve itself somewhat, and scale with availability of hardware and data but largely the initial system is very close to the best that can be done

So to recap, this essay aims to make the concrete and narrow point that AGIs can pose risks even when we take the sci-fi away. I am not saying anything about:

- How likely AGI is to occur and when (By assumption it happens)

- How can safe AGIs be designed (By assumption the system explored here has no shackles of any sort)

- Whether AGIs with arbitrary goals can be dangerous (I assume an explicitly harmful goal has been given)

When making a case for a conclusion one should only deploy the minimal set of premises required to establish it, and no more. In practice, more premises can increase the power of an argument; the set of risks that emerge from sci-fi-less AGI is strictly smaller than the set of risks from recursively self-improving AGI. Pedagogically, I expect that making a simplified but stronger case for AGI risk as a prelude to further speculation about sci-fi AGI risk would be more compelling than reasoning exclusively from a set of premises that includes sci-fi.

AGI: a working definition

By an AGI I mean an agent that has a world model that's vastly more accurate than that of a human in, at least, domains that matter for competition over resources, and that can generate predictions at a similar rate or faster than a human.

The reason for this definition is to broadly focus on the kinds of systems that seem risky:

- Here I consider agents, systems that interact with the world, observe the effects of their actions, and act on that feedback. Oracles (a system that is asked something will produce an answer but otherwise do nothing further) are a lesser problem, but they could be turned into agents without great difficulty. Oracles could pose their own problems (How trustworthy is their advice?) but here I am mostly thinking of agents.

- A particularly slow AGI would not be much of a threat. It's no good to carefully plan how to take over the world if it takes you a year to decide what to do next. For reference (Thanks to Kipply Chen for the number), a forward pass of, say, GPT-3 is on the order of milliseconds; a paragraph (300 words/600 tokens) may take 10 seconds. Compare a human developer who writes 300 lines of code in a day.

- The system doesn't have to really be general. We can stipulate away some capabilities; maybe the system can't compose poetry, make bagels, or fold your clothes. As long as capabilities required for resource competition (Like planning, sufficiently good memory etc) are present one can construct interesting risk scenarios. Thus we will still use AGI even though the 'G' doesn't have to mean 100% General.
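To make the speed comparison above concrete, here is a rough back-of-the-envelope sketch. The 600 tokens per 10 seconds and the 300 lines per day come from the text; the tokens-per-line figure and the assumption that the model runs around the clock are illustrative guesses, not measurements. It compares raw output rate only and says nothing about the quality of what is produced.

```python
# Figures quoted in the text
TOKENS_PER_PARAGRAPH = 600
SECONDS_PER_PARAGRAPH = 10
HUMAN_LINES_PER_DAY = 300

# Assumptions NOT in the text (illustrative only)
TOKENS_PER_LINE_OF_CODE = 10      # hypothetical average
SECONDS_PER_DAY = 24 * 60 * 60    # assumes the model generates non-stop


def model_tokens_per_second():
    """Raw generation rate implied by the paragraph figure."""
    return TOKENS_PER_PARAGRAPH / SECONDS_PER_PARAGRAPH


def model_lines_per_day(tokens_per_line=TOKENS_PER_LINE_OF_CODE):
    """Lines of code emitted per day at that rate, under the assumptions above."""
    return model_tokens_per_second() * SECONDS_PER_DAY / tokens_per_line


def speedup_over_human(tokens_per_line=TOKENS_PER_LINE_OF_CODE):
    """Ratio of model output to the human developer's 300 lines per day."""
    return model_lines_per_day(tokens_per_line) / HUMAN_LINES_PER_DAY


if __name__ == "__main__":
    print(f"tokens/second: {model_tokens_per_second():.0f}")
    print(f"lines/day:     {model_lines_per_day():.0f}")
    print(f"ratio:         {speedup_over_human():.0f}x")
```

Changing `TOKENS_PER_LINE_OF_CODE` shifts the ratio proportionally, but under any plausible value the gap stays at several orders of magnitude.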

As far as the scenarios in this essay are concerned, you can imagine the system to be capable of any cognitive task any human alive today is capable of but 100x faster, in addition to more advanced planning skills. The point of the definition is to gesture at kinds of systems that would enjoy substantial advantage in competition over resources. This advantage need not be absolute in every possible way; as an analogy, what determines victory in armed conflict is not any single factor but a mix: Numerical advantage, technological superiority, morale, effective communications, supply chains. We can generally speak of armies that are more powerful than others in general without committing to superiority in every single way, and the same is true for AGI. What it needs, as a lower bound, is the right mix of capabilities without necessarily besting humans at every single one.

AGI risk depends on the environment the AGI is placed in. An AGI (that is, a small datacenter) sent all the way back to the middle of the Amazonian jungle would not be able to do anything of interest. Similarly, an AGI hypothetically sent to XVII century London could likewise be severely constrained in what it can do. Reasonable risk scenarios arise from the conjunction of AGI and increasingly connected societies where compute is available to relatively anonymous customers and where personal relations tend to be mediated by digital media, allowing an initially disembodied AGI to impersonate humans by generating realistic images, voice, and video streams.

If you find yourself trying to poke holes in this definition or thinking that if we grant the system doesn't have to be fully general then it can't be called AGI, stop doing that and continue reading :)

Why AGI makes sense

Some, like Steven Pinker here, think Artificial General Intelligence doesn't make sense as a concept and this is very likely driving their dismissal of AI risk:

[...] I think the concept of “general intelligence” is meaningless. (I’m not referring to the psychometric variable g, also called “general intelligence,” namely the principal component of correlated variation across IQ subtests...) I find most characterizations of AGI to be either circular (such as “smarter than humans in every way,” begging the question of what “smarter” means) or mystical—a kind of omniscient, omnipotent, and clairvoyant power to solve any problem. [...]

If we do try to define “intelligence” in terms of mechanism rather than magic, it seems to me it would be something like “the ability to use information to attain a goal in an environment.” [...] Specifying the goal is critical to any definition of intelligence: a given strategy in basketball will be intelligent if you’re trying to win a game and stupid if you’re trying to throw it. So is the environment: a given strategy can be smart under NBA rules and stupid under college rules.

Since a goal itself is neither intelligent or unintelligent (Hume and all that), but must be exogenously built into a system, and since no physical system has clairvoyance for all the laws of the world it inhabits down to the last butterfly wing-flap, this implies that there are as many intelligences as there are goals and environments. There will be no omnipotent superintelligence or wonder algorithm (or singularity or AGI or existential threat or foom), just better and better gadgets.

The definition that Pinker ultimately grants for "general intelligence", being able to attain goals in an environment, is sufficient to make the concept coherent in general. You can think of generality as a system being able to attain arbitrary goals it is given. Humans, monkeys, and parrots can be trained to attain some such goals, but only a human, for now, would be able to, for example, take a description for a software system and write its code, and the same is true for many tasks, especially when limited to cognitive tasks. In that sense humans have intelligence that is more general than that of monkeys.

Something that is true, and that perhaps Pinker is getting at is that the optimality of an agent depends on its environment and what the goal is. For example, we wouldn't think that AlphaFold is a general intelligence, and yet in the domain of "Being given protein sequences and having to produce 3D representations of proteins" it outperforms humans. Moreover, it does make sense that very powerful domain-specific systems beat general intelligences: Once a task has been defined one can design the entire system and its hardware around optimizing for a single objective. The ultimate expression of this is etching algorithms in hardware (As seen in ASICs). AGIs won't be able to outperform these domain-specific systems in their specific domains, but just like humans they will be able to recognize that sometimes one does want to design and deploy such systems to supplement the AGI's capabilities.

It is then trivial to see that systems can exist that for many goals and one given environment (Earth in the 21st century) could outperform another system (a collective of humans), if allowed enough time and resources.

The AGI risk scenarios

These are two scenarios I came across, meant to be representative of a certain class of argument, but definitely not of all proposed AI risk scenarios:

A superintelligence might just skip language entirely and figure out a weird pattern of buzzes and hums that causes conscious thought to seize up, and which knocks anyone who hears it into a weird hypnotizable state in which they’ll do anything the superintelligence asks (Alexander, 2016)

it [the AGI] gets access to the Internet, emails some DNA sequences to any of the many many online firms that will take a DNA sequence in the email and ship you back proteins, and bribes/persuades some human who has no idea they're dealing with an AGI to mix proteins in a beaker, which then form a first-stage nanofactory which can build the actual nanomachinery ... The nanomachinery builds diamondoid bacteria, that replicate with solar power and atmospheric CHON, maybe aggregate into some miniature rockets or jets so they can ride the jetstream to spread across the Earth's atmosphere, get into human bloodstreams and hide, strike on a timer.. (Yudkowsky, 2022)

Predictably someone has already written what I and many others think when they see scenarios like this:

Specifically, with the claim that bringing up MNT [i.e. nanotech] is unnecessary, both in the "burdensome detail" sense and "needlessly science-fictional and likely to trigger absurdity heuristics" sense. (leplen at LessWrong, 2013)

I have some general concerns about the existing writing on existential accidents. So first there's just still very little of it. It really is just mostly Superintelligence and essays by Eliezer Yudkowsky, and then sort of a handful of shorter essays and talks that express very similar concerns. There's also been very little substantive written criticism of it. Many people have expressed doubts or been dismissive of it, but there's very little in the way of skeptical experts who are sitting down and fully engaging with it, and writing down point by point where they disagree or where they think the mistakes are. Most of the work on existential accidents was also written before large changes in the field of AI, especially before the recent rise of deep learning, and also before work like 'Concrete Problems in AI Safety,' which laid out safety concerns in a way which is more recognizable to AI researchers today.

Most of the arguments for existential accidents often rely on these sort of fuzzy, abstract concepts like optimization power or general intelligence or goals, and toy thought experiments like the paper clipper example. And certainly thought experiments and abstract concepts do have some force, but it's not clear exactly how strong a source of evidence we should take these as. Then lastly, although many AI researchers actually have expressed concern about existential accidents, for example Stuart Russell, it does seem to be the case that many, and perhaps most AI researchers who encounter at least abridged or summarized versions of these concerns tend to bounce off them or just find them not very plausible. I think we should take that seriously.

I also have some more concrete concerns about writing on existential accidents. You should certainly take these concerns with a grain of salt because I am not a technical researcher, although I have talked to technical researchers who have essentially similar or even the same concerns. The general concern I have is that these toy scenarios are quite difficult to map onto something that looks more recognizably plausible. So these scenarios often involve, again, massive jumps in the capabilities of a single system, but it's really not clear that we should expect such jumps or find them plausible. This is a wooly issue. I would recommend checking out writing by Katja Grace or Paul Christiano online. That sort of lays out some concerns about the plausibility of massive jumps. (Garfinkel, 2019)

Informally, a large proportion of AI safety writing, especially in the early days, has been influenced by the writings of Eliezer Yudkowsky. Yudkowsky has not written in detail about AGI risk scenarios, and when he does, his lower bound (i.e. the simplest) threat model still involves something that will sound preposterous to many:

The concrete example I usually use here is nanotech, because there's been pretty detailed analysis of what definitely look like physically attainable lower bounds on what should be possible with nanotech, and those lower bounds are sufficient to carry the point. My lower-bound model of "how a sufficiently powerful intelligence would kill everyone, if it didn't want to not do that" is that it gets access to the Internet, emails some DNA sequences to any of the many many online firms that will take a DNA sequence in the email and ship you back proteins, and bribes/persuades some human who has no idea they're dealing with an AGI to mix proteins in a beaker, which then form a first-stage nanofactory which can build the actual nanomachinery. (Yudkowsky, 2022)

I suspect one of the reasons (implicitly or explicitly) why there has been little attention to discussing specific scenarios of AGI risk is the idea of Vingean Uncertainty.

Vingean Uncertainty: Thoughts that cannot be thought

Vingean uncertainty is a concept coined by Eliezer Yudkowsky to refer to the fact that with very intelligent systems, we can be relatively sure that they will achieve their goals while at the same time being very uncertain as to how. Think of AlphaGo: we know AlphaGo will crush you at Go, and sometimes a grandmaster can guess what AlphaGo will play, but sometimes it will play something that seems to make little sense, though that move is still a winning move. Related to this concept is strong cognitive uncontainability, or the idea that one can't even conceive of the sort of actions that a very intelligent system will take. If one really buys into this then it makes a lot of sense, as many in AI safety do, to just take it as a given that an AGI will achieve its goal, and not think much about how: it will always find a way, and even if we try to brainstorm all possible ways it could take over, it will still find other ways.

Fun fact: Vernor Vinge himself didn't believe [a variant of] this and I tend to side with Vinge here (He was replying, it seems, to an older essay from Eliezer Yudkowsky)

An example given in the links above is air conditioning. Someone living in the 10th century, if asked to brainstorm ways to cool a room, would not have thought of air conditioning. When presented with an AC unit they may not immediately understand what it is. This example works if physics that are not known to the relevant society are at play. But if we assume a narrower version of technological pessimism (some flavor of physics pessimism, which I do buy) then this kind of example wouldn't work anymore.

If the AGI will always axiomatically find a way out, then of course it makes sense to not spend much time exploring AGI dominance.

As a cautionary principle, expecting the unexpected is reasonable, but that should not stop us from trying hard to think about concrete scenarios and potential mitigations. Maybe that doesn't provably eliminate risk but it does seem to me one can reduce it. The reason I don't buy "strong" Vingean uncertainty is that unlike with the air conditioning example, we roughly know the laws of physics. We also know some things about social science, and it is exceedingly unlikely that there are big regularities waiting to be exploited in unexpected ways. We can foresee some things an AGI would try to do without that much difficulty (instrumental convergence): It would try to acquire resources and protect itself at the very least. Initially it will do so by sending requests over the internet, most likely hacking, bribing, and impersonating. Eventually it will need to find a way to issue orders to humans, and plausibly build robots. This is by no means the complete set of things, but a fairly complete set is not unimaginable. The reasoning behind the plans the system would make would be alien to us, but whereas the specifics are alien, the overarching goals, and most likely the high level steps the system would take, would be clear.

The strongest bounds we can place on the actions taken by an AGI are those given by the laws of physics directly. Weaker are the bounds that we suspect could be derived from the laws of physics but not in a trivial way; in theory there is a limit one can derive from first principles to how much computation a given volume of matter can do, but in practice the situation may be worse than what's discussed in the Wikipedia article: There may be other constraints besides "doing the computation", like thermal dissipation, I/O, and the properties of the materials required to implement the computing unit. It's of little use in the immediate future if the only way to approach Bremermann's limit is leveraging a black hole for compute or something of that sort.
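For a sense of scale, Bremermann's limit is usually stated as c²/h bits per second per kilogram of matter. The sketch below just evaluates that formula; the function names are made up for illustration, and the result is a theoretical ceiling that ignores the practical constraints (heat, I/O, materials) mentioned above.

```python
# Physical constants (exact SI values since the 2019 redefinition)
SPEED_OF_LIGHT_M_PER_S = 299_792_458
PLANCK_CONSTANT_J_S = 6.62607015e-34


def bremermann_limit_bits_per_s_per_kg():
    """Bremermann's limit, c^2 / h, in bits per second per kilogram."""
    return SPEED_OF_LIGHT_M_PER_S ** 2 / PLANCK_CONSTANT_J_S


def max_bits_per_second(mass_kg):
    """Theoretical ceiling for a system of the given mass (illustrative helper)."""
    return mass_kg * bremermann_limit_bits_per_s_per_kg()


if __name__ == "__main__":
    # Roughly 1.36e50 bits per second per kilogram
    print(f"{bremermann_limit_bits_per_s_per_kg():.3e} bits/s/kg")
```

Real hardware sits many orders of magnitude below this figure, which is why the weaker, engineering-level bounds matter more for near-term scenarios.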

This still leaves some cases where we would be wise to expect the unexpected: Every software engineer has been in a situation where the code seems to work, where it passes tests, where the linters say it's ok, and yet it fails or has a bug somewhere in the implementation.

The Pegasus vulnerability is one example of a surprising vulnerability: Who would have thought, in 2021, that an old image codec (JBIG2) buried in the entrails of a PDF parser (Xpdf) could be used to construct a Turing machine inside of it when reading an image, and that this could be used to take over a phone? Just by opening an image! In a later section I have some things to say about cybersecurity, but suffice to say here that this example is good to establish that we should expect novel kinds of cyberattacks even in systems that seem sandboxed and safe. We won't be surprised once we see them, and we will react to them as the Google team did ("It's pretty incredible, and at the same time, pretty terrifying.").

These hacking examples can be generalized to systems that can be efficiently simulated and/or where strong deterministic relationships exist (Like code, or games). I don't think one can extend this to, say, the AI inventing a hitherto unthinkable form of social manipulation or a plan that involves an unusual series of actions in the real world. Some examples of this:

One day the AGI is throwing cupcakes at a puppy in a very precisely temperature-controlled room. A few days later, a civil war breaks out in Brazil. Then 2 million people die of an unusually nasty flu, and also it’s mostly the 2 million people who are best at handling emergencies but that won’t be obvious for a while, because of course first responders are exposed more than most. At some point there’s a Buzzfeed article on how, through a series of surprising accidents, a puppy-cupcake meme triggered the civil war in Brazil (Wentworth, 2022)

Can someone build world models as accurate as the ones implied by this quote? I am very skeptical!

Minimum Viable AGI Risk

Why aren't organizations like say Google maximally efficient? To some extent, cognitive limitations. Each person can only proficiently do a small number of tasks in a workday, so your engineers can't also be accountants, lawyers, and designers. You can't hold the entire codebase in memory so you need teams to build and maintain search tools. And chiefly, we can't directly share thoughts: Time must be spent in coordination and meetings to iron out differences and jointly plan. Employees might quit so one has to hire accounting for turnover. As hiring increases one needs to hire managers and managers of managers. At some point perhaps one gets office politics or corporate whistleblowers.

Now we get into some measure of science fiction, but we do so purely as a device to construct an analogy, not to propose a real scenario. Imagine we could get rid of most of that by assuming that everyone in the company can communicate with everyone else telepathically, that everyone is also gifted with enough memory to remember as much as they'd like, and that they can think 100x faster than a regular person can.

I think this entity would be sufficient to pose an existential risk to mankind, if allowed enough time. I am not saying here that the upper limit of what an AGI could do is what the Google Borg could do, just making the narrower claim that this is all we need to consider AI to be a problem. A fortiori, more advanced forms of AGI would be an issue.

Some discussions of AGI risk distinguish between a population of multiple systems and a single system. This distinction is not that interesting to me: A single agent or a group of well aligned agents are for many intents and purposes the same; we currently think this way when reasoning about groups of persons like corporations. So while the hypothetical example here is a Google Borg, you can think of it as a single agent that can do what a large group of humans with access to computers can do. It is likely that because domain-specific systems are more efficient, all else equal, an AGI would still rely on smaller models for specific tasks, in a master-slave relationship. These models need not be general or agentic, e.g. maybe the AGI would develop a model that's great at writing code and nothing else, and another to do robotics research and nothing else.

Intelligence

In this essay, take intelligence to mean something in the vicinity of capable, or: For a given allocation of resources and time, more intelligent systems accomplish more of their goals than less intelligent systems, on average. An Artificial General Intelligence is generally intelligent in that it is capable of accomplishing most if not all goals that you could think of as a human, and doing so with a greater level of speed and/or probability of success than a human would be able to.

This doesn't mean that for any task in any environment an AGI will be better than a purpose-specific system. There is a no free lunch theorem; however, the theorem doesn't apply if, instead of every possible task, we just think of this system as being better than any other algorithm at a subset of tasks that are relevant to its purposes.

If you take humans and chimpanzees, humans outclass chimpanzees at almost any cognitive task, especially the ones that have enabled humans to become the dominant species on Earth. Chimpanzees might be better than humans at some tasks like short term memory tasks but this sort of skill is not key in comparison with others (learning, communication, strategic planning) given the goal of becoming a dominant species.

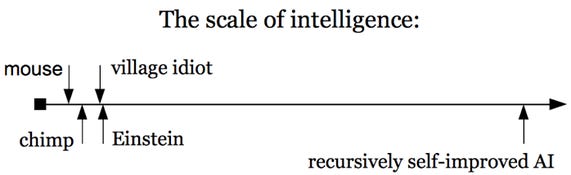

There is one line of argument in the AI risk world that starts with this picture (From Nick Bostrom's book)

My initial reaction to this picture is that the scale is not quite right, that humans are qualitatively at a different level from the rest of living creatures, and that that quality is almost binary. A lizard or group thereof, no matter how much time they are given, won't get you civilization. But then here one could ask the same question: "If you take the village idiot in the picture and task them with coming up with string theory, would they be able to, in any reasonable amount of time?" It's not that clear to me! If not, why not? Some combination of IQ, memory, and assorted capabilities. We perhaps can imagine how it feels to be less intelligent (One experiment would be to get drunk I guess), but we find it hard to imagine how we could be more intelligent. As an intuition pump take von Neumann, and what his peers said of him:

“There was a seminar for advanced students in Zürich that I was teaching and von Neumann was in the class. I came to a certain theorem, and I said it is not proved and it may be difficult. Von Neumann didn’t say anything but after five minutes he raised his hand. When I called on him he went to the blackboard and proceeded to write down the proof. After that I was afraid of von Neumann.” (George Polya)

I have sometimes wondered whether a brain like von Neumann's does not indicate a species superior to that of man. (Hans Bethe)

It could be tempting to just say that over some threshold (undergraduate students?), a sufficient number of them, given enough time can achieve anything a von Neumann could achieve. I am not yet sure how to think about this!

For an even stronger sense of what higher intelligence would mean, consider protein folding. AlphaFold can fold proteins (i.e. produce an accurate position map of 2337 residues, or a few tens of thousands of atoms) in a few hours. Sure enough, a human being could do what AlphaFold does, given pen and paper and a very very long time (doing all the required matrix multiplications), but they would not come away with an understanding of what it is like to fold a protein. One could also try to understand some basic rules, and maybe for simpler structures it is actually possible to do protein folding by thinking hard enough, but this does not work for the general case.

In some deep sense, AlphaFold 'understands' protein folding and you don't. It's possible, I suspect, to gain a glimpse of this kind of understanding by playing around with small proteins and reverse engineering the AlphaFold predictions, but the feeling that accompanies the full understanding of the phenomenon would remain out of reach. Carl Shulman pointed out during the review of this essay that we do have handcrafted protein folding software, and that we understand the way this software works. This is true: If one takes models other than AlphaFold2 one doesn't find the kinds of rules one would hope to understand (Like Fourier's law or the Navier-Stokes equations say), one finds various forms of energy minimization where one defines the system and simulates some simplified physics. What I think we'd need to understand it would be rules like "Alanine over here is charged in such way and it causes these other aminoacids to curl up" or "These three aminoacids here probably form a pocket that binds to that other wiggle over there" and so forth. This is doable (We have the concepts of alpha helices or beta sheets), but not in a detailed enough way to allow one to use these concepts to fold proteins in a reasonable amount of time by hand.

There is a whole section on 'understanding' that I could write but don't have time to discuss here so this will have to suffice for now.

To this deeper understanding of systems, you could add perfect memory: Imagine knowing all publicly available information. Say you're reading a paper. In What should you remember? I mention a case where I made a connection between two hitherto unrelated (to my knowledge) biological entities (CD38 and NAD). The details don't matter here; what matters is that I thought of an interesting connection (as it happened, there was a recent paper that had actually shown it empirically, but I wasn't initially aware of it) because I was able to remember facts about those entities. The more things you can recall, and especially the more relevant things you can recall, the more and better plans of action you can conceive.

The limits of intelligence

One critique of AI risk scenarios is that intelligence has diminishing marginal returns in noisy environments; as a quick example an AGI won't do better than you at guessing the outcome of flipping a perfectly balanced coin.
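
The coin example can be checked exactly rather than taken on faith. Below is a minimal sketch, assuming three made-up guessing strategies of my own (none of them come from the critique itself): it enumerates every equally likely sequence of 10 fair flips and computes each strategy's exact average accuracy. Whatever a strategy does with the history, the next flip is independent of it, so no amount of cleverness moves the number away from one half.

```python
from fractions import Fraction
from itertools import product


# Three illustrative (hypothetical) strategies. Each one sees the flips so far
# and returns a guess for the next flip.
def always_heads(history):
    return "H"


def repeat_last(history):
    return history[-1] if history else "H"


def bet_on_majority(history):
    return "H" if history.count("H") >= history.count("T") else "T"


def exact_accuracy(strategy, n_flips):
    """Exact fraction of correct guesses, averaged over all 2**n_flips
    equally likely sequences of a fair coin."""
    correct = 0
    total = 0
    for flips in product("HT", repeat=n_flips):
        for t, outcome in enumerate(flips):
            guess = strategy(flips[:t])  # the strategy only sees the past
            correct += guess == outcome
            total += 1
    return Fraction(correct, total)


ACCURACIES = {
    s.__name__: exact_accuracy(s, 10)
    for s in (always_heads, repeat_last, bet_on_majority)
}

if __name__ == "__main__":
    for name, accuracy in ACCURACIES.items():
        print(f"{name}: {accuracy}")
```

The fair coin is of course the limiting case of a noisy environment; the interesting question is how much of the real world looks like that.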

If you wanted to, say, start a civil war in Brazil, is there a set of actions that reliably could lead to such an outcome? One commentator speculates actions like AGIs throwing a cupcake at a dog could lead to that. That doesn't seem that realistic to me. We do know that superhuman intelligences can take unexpected actions that don't seem to make sense until later on, like AlphaGo's Move 37, but the real world is not an easily predictable environment.

Take Hitler. Scott Alexander says that

Hitler leveraged his skill at oratory and his understanding of people’s darkest prejudices to take over a continent. Why should we expect superintelligences to do worse than humans far less skilled than they?

Hitler joined a small political party, the Deutsche Arbeiterpartei and used his oratory to gain new members, eventually becoming its leader when it became the NSDAP. From here, he took over Germany and the rest is history. What Hitler did is not something an arbitrarily chosen person born when he did could have done. But his success was not determined either: All sorts of blunders or opponent moves could have derailed his plans. Hitler also of course leveraged the fact that Germany was really angry at the world for the position it ended in after WWI. In fact his own agenda was coming from the same sense of national humiliation that he then leveraged to gain power. Had he had an arbitrary agenda (paperclips are super important, we must make them) it's unlikely he'd have been able to gain power to pursue it. He could have used his original agenda to then move on to paperclips, but the point I'm making here is that no amount of superhuman persuasion will convince the nation of Germany back then that paperclips are the only thing worth making.

In Complexity No Bar to AI, Gwern discusses why some problems being complex (NP-hard say) is no barrier to AGI. He is right: Approximations can get you far enough! Moreover, the advantage of an AGI system doesn't have to be orders of magnitude to win in some contexts:

small advantages on a task do translate to large real-world consequences, particularly in competitive settings. A horse or an athlete wins a race by a fraction of a second; a stock-market investing edge of 1% annually is worth a billionaire’s fortune; a slight advantage in picking each move in a game likes chess translates to almost certain victory (consider how AlphaGo’s ranking changed with small improvements in the CNN’s ability to predict next moves); a logistics/shipping company which could shave the remaining 1–2% of inefficiency off its planning algorithms would have a major advantage over its rivals inasmuch as shipping is one their major costs & the profit margin of such companies is itself only a few percentage points of revenue; or consider network effects & winner-take-all markets. (Or think about safety in something like self-driving cars: even a small absolute difference in ‘reaction times’ between humans and machines could be enough to drive humans out of the market and perhaps ultimately even make them illegal.)
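
One way to see how a small per-move edge can turn into a near-certain win is a toy model. This is a sketch under my own assumptions, not Gwern's calculation: a contest is `n_rounds` independent rounds, the stronger side wins each round with probability 50% plus a small edge, and whoever wins the strict majority of rounds wins the contest. The function name and the choice of a 1-point edge are illustrative.

```python
from fractions import Fraction
from math import comb


def p_win_match(edge_pct, n_rounds):
    """Probability of winning a strict majority of n_rounds independent rounds
    when each round is won with probability (50 + edge_pct)%.

    edge_pct is an integer number of percentage points. The sum is done in
    exact integer arithmetic, so long matches do not underflow.
    """
    win, lose = 50 + edge_pct, 50 - edge_pct
    needed = n_rounds // 2 + 1  # smallest number of rounds that is a majority
    favourable = sum(
        comb(n_rounds, k) * win**k * lose ** (n_rounds - k)
        for k in range(needed, n_rounds + 1)
    )
    return float(Fraction(favourable, 100**n_rounds))


if __name__ == "__main__":
    for n in (1, 11, 101, 1001):
        print(n, round(p_win_match(1, n), 3))
```

With a 1-point edge, the chance of winning the whole contest grows steadily with the number of rounds, which is the sense in which a barely perceptible advantage can end up decisive in a long enough game.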

But my worry here is different: setting aside molecularly accurate simulations of the world, I think my estimate of what's possible to predict with large but reasonable amounts of suitably arranged computing power is lower than what's in the minds of many writers on AI safety. In Appendix A I discuss Curtis Yarvin's critique, which hinges on intelligence having diminishing marginal returns. I do buy that argument, so for a while I thought that AI would not be risky because the risk would have to come from the kinds of abilities that I think a system can't have. But it turns out that, even without those, one can still have a problem if the system can operate at high velocity and is superhumanly skilled at planning.

How easy is it to take over the world?

Some scattered thoughts and notes I took while exploring. I ended up focusing on cybersecurity (hacking over the internet seems like something an AGI would totally try to do), bioweapons (because it's the closest we have to nanotechnology, and because manufactured pandemics seem like something one could do relatively covertly), and manipulation, because that struck me as an implausible skill one can take to superhuman levels.

Cybersecurity

One of the things an AGI could do is try to hack into various kinds of systems (government, military, energy generation, manufacturing) to use them, extract information, or for other purposes. How likely is this? Cyberattacks are definitely not science fiction: In a document from 2020 Luke Muehlhauser collects examples of such hacks and their prevalence. Some particular additional scenarios:

- Stuxnet (2010), where Iranian gas centrifuges, used to enrich uranium for nuclear weapons, were made to tear themselves apart.

- In general, it is possible to hack into and destroy critical physical equipment

- But note that in general, the systems that control, say, nuclear power stations are not connected to the internet, precisely to prevent these kinds of scenarios. It's not trivial to gain enough control over a power plant to send commands to shut it down or cause it to melt down. The Stuxnet case, however, shows that it is possible to engineer malware that (via USB drives) can cross that air gap if the operators are not careful and plug these drives into systems that have access to the controls

- In 2008, a spyware worm found its way into the US military's Central Command

- In 2009, a number of US companies, including Google, were hacked (Operation Aurora); attackers were able to access source code and information, including private Gmail accounts. Google massively bolstered the security of its systems in response.

- In 2017, because of a vulnerability in a customer complaint form, Chinese hackers were eventually able to get into Equifax's systems and access information on 147 million people, including birth dates, addresses, and driver's license numbers (which one could then potentially use for identity theft). Equifax took over two months to notice they had been compromised.

- In 2021, a Brazilian meat-packing company paid a ransom of $11M (in bitcoin) to revert an attack that took their entire US operation offline

- In 2021, an oil pipeline in the US was compromised. The operator paid $5M (in bitcoin) to resume operations

- In general, there exist firms that can be hired to perform ransomware attacks. The revenues from such attacks are close to $100M for one such firm.

- In 2021, the Log4Shell exploit left a large number of systems open for access, even if one simply sent them an HTTP GET request. This would not be necessarily root access, but in practice the same access level the server is running at should be enough for most nefarious purposes.

- In 2022, a large fraction of NVIDIA's IP was stolen

Given these background facts it seems very plausible that if one had an AGI, one would be able to obtain resources either by holding cyber assets hostage or by directly taking over them.

However, it's unclear the extent to which adding AGIs to the mix makes the problem that much worse than what might happen in upcoming years. The internet is already a nasty Hobbesian jungle where systems are constantly under attack already.

I can imagine purpose-specific systems being developed to hack and find vulnerabilities (Naively, train on code, predict CVEs). Those could be deployed both to police codebases and make sure the code has no vulnerabilities, and to find new exploits. On net, the development of AI systems for improving cybersecurity should be a priority. Companies like OpenAI (originators of the Codex model for code generation) are well positioned to integrate these with their existing models to ensure the code they generate is not making programmers autocomplete their code with hackable functions.

Could an AGI copy itself all over the world?

Some scenarios of AI risk posit an AGI spreading over the internet by copying itself to other systems to gain capabilities and resilience. This is unlikely to be a major issue, at least at first, under the very plausible assumption that the model has a substantial size. As an example, running the original GPT-3 (175B parameters) would require some 350 GB of memory just to load the weights. Google's PaLM gets to the terabyte scale. Even Chinchilla's 70B parameters require 140 GB. Of course these systems are far from AGI, so one can only imagine how much more massive AGI-level systems will be. In the foreseeable future, personal computing devices will remain far below the memory requirements of AGI-level systems. A fleet of such devices wouldn't be that useful either: The network latency involved in doing inference by sending results of computation back and forth across the internet would make for a very slow system that would consequently be easy to counter. Hence the only places where an AGI could copy itself and make effective use of the copied parameters are large datacenters: If AWS, Alibaba Cloud, Azure, GCP, and a handful of others have decent security measures (perhaps the ones they have right now), an AGI could be more easily stopped.
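
The memory figures above are simple arithmetic: number of parameters times bytes per parameter. Here is a minimal sketch, assuming 2 bytes per parameter (16-bit weights), which is the assumption that reproduces the 350 GB and 140 GB figures. The 540B parameter count for PaLM comes from the PaLM paper rather than from this essay, and the 16 GB device is a hypothetical consumer machine chosen for illustration.

```python
import math

# Assumption: weights stored in 16-bit floating point, i.e. 2 bytes each.
BYTES_PER_PARAM_FP16 = 2

# Parameter counts. GPT-3 and Chinchilla are the figures quoted in the text;
# PaLM's 540B is taken from the PaLM paper.
MODELS = {
    "GPT-3": 175e9,
    "Chinchilla": 70e9,
    "PaLM": 540e9,
}


def weights_memory_gb(n_params, bytes_per_param=BYTES_PER_PARAM_FP16):
    """Memory needed just to hold the weights, in GB (1 GB = 1e9 bytes).

    Ignores activations, caches and any runtime overhead, so it is a floor.
    """
    return n_params * bytes_per_param / 1e9


def devices_needed(n_params, device_memory_gb):
    """Minimum number of devices whose combined memory can hold the weights."""
    return math.ceil(weights_memory_gb(n_params) / device_memory_gb)


if __name__ == "__main__":
    for name, n_params in MODELS.items():
        gb = weights_memory_gb(n_params)
        # 16 GB is a hypothetical consumer device used only for illustration.
        print(f"{name}: {gb:.0f} GB, at least {devices_needed(n_params, 16)} devices of 16 GB")
```

Even this floor means the weights of a GPT-3-sized model would have to be sharded across a couple dozen 16 GB machines before any inference happens, and every forward pass would then have to cross the network between them.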

More realistically than copying itself all over the internet, an AGI system could commandeer unprotected systems to deploy purpose-specific programs (As hackers do now with DDoS botnets). This would still leave the core of the system centralized and vulnerable to an attack.

This could be prevented to some extent. Many or most companies get hacked, but fortunately the kinds of companies that hold large computational resources seem to be fairly secure, and increasingly so. There are some further actions that could be taken to help strengthen cybersecurity: one could develop purpose specific AI systems to find bugs in open source software that have been introduced inadvertently or purposefully (perhaps by an AGI as part of a plan to break into a system that relies on said OSS). DARPA has a program working on precisely that, SocialCyber.

Some objections raised during the review

Carl Shulman points out that distributed computing is more feasible than I think (the penalty is real but can be worked with), and that datacenters have a large chunk of compute that could be seized without the owners noticing. The latter is right (obviously), and this is precisely why I think that if the right datacenters are secure, then most of the relevant compute is secure, even if 99.99% of personal computers are not. A relevant post he links me to here is The prototypical catastrophic AI action is getting root access to its datacenter. If I were any of the relevant companies listed above I would be spending a lot of resources on avoiding this scenario. I don't know how cybersecurity works at any of these, but I would be very surprised if getting root were trivial for an AGI.

Niccolò Zanichelli is not sure AWS et al. have secure enough measures to resist a takeover. Sure individual accounts get hacked and individuals get bribed, but to this day these platforms have not been compromised as extensively as the root access scenario requires (as far as we know).

He also tells me that not much work has gone into decentralized training because there is no economic reason to do it. Perhaps an AGI could do it better. A paper he links me to simulates training of large models (GPT-3 scale and beyond) over the internet, finding a slowdown of 1.3-3.5x, with the worst case being 8 cities across 3 continents. This latter setup has 64 Tesla V100 GPUs. I'm ok with this: I had in mind a takeover of regular desktop computers or IoT devices. IoT devices are easier to hack and in my mental model I was mostly thinking of those. Desktop computers are very hard to hack, and I would expect the kind of person that has V100 GPUs at their disposal to be more careful than the kind of user that gets their personal computer hacked.

Lastly, Matthew McAteer points to model compression (and Niccolò to distillation, and Josh Albrecht to recent results like RETRO and mixture of experts models) as ways in which the scenario is, on the margin, more plausible. This all seems okay to me: I could be wrong if models could be compressed to the point of running on IoT devices or random unprotected servers without GPUs. I have to admit that without extensively reading that literature I don't have a good sense of how far these techniques will go, and thus how easy it would really be for AGIs to copy themselves over the internet. I can only hope the computational requirements of progressively more powerful models continue to outpace the capabilities of the median hackable server.

Air gaps

One way to secure systems from hacking is to have them disconnected from the internet ("air gapping"). This doesn't always work (see the Stuxnet attack described earlier), but importantly, we do have good models of how air gaps can and cannot be breached: USB pen drives (physically breaching the gap, as in the 2008 attack on the US military described earlier) are one way. It is also possible to repurpose some of the electronics in a computer as radio transmitters. [Here](https://cyber.bgu.ac.il/advanced-cyber/air gap) some such vulnerabilities are described (See video). But it is one thing to leak data, or to be able to transmit it if a computer nearby is suitably equipped and is running the right software, and quite another to hack your way out of an air gapped computer. One should not extrapolate from air gap exfiltration to being able to hack into a computer without touching it, having only a weak antenna available. To this date no one has shown an exploit, or even an in-principle theoretical example, of taking control of an air gapped computer against the will of its owner (as opposed to reading data from it), and it is most likely the case that, given computers of the sort that will exist in a few years, air gaps will only be breached in the ways they are now: social engineering.

Bioweapons production

The DNA sequences to make Ebola or anthrax are online. Can one just go and make it by sending emails? Apparently yes!

This is something a terrorist might be able to do right now but it hasn't happened so far. Are there insurmountable difficulties even for an AGI? Probably not.

What does it take to actually produce bioweapons? Surprisingly I could not find any detailed discussion of the matter in the usual fora where AI risk is discussed, despite some individuals trying to elicit such stories from the community. I tried searching the following terms which I hope would have surfaced the relevant discussion: DNA, ebola, anthrax, biosafety, addgene, twist biosciences, genscript. The one exception is Tessa Alexanian's post and resources therein. Though I did not check all the links from those resources, it does seem that the issue has not been discussed at length in those spaces.

So here's some discussion. I focus on bioweapons as opposed to chemical weapons because the latter are relatively limited in the area affected whereas bioweapons could in principle spread out of control. Some chemical weapons like ricin could be easily made but would not pose an existential threat: their effect is localized and it would take time to build up enough of the substance.

There are precedents for the use of bioweapons by non-state agents: In 2001 a US biodefense researcher mailed anthrax to a number of US media offices and politicians, killing 5 people; however, anthrax does not spread between people.

The sequences for mean things like the plague are all online. So what are bioterrorists waiting for? The cost is not prohibitive either: in what's probably the best-known case of a researcher doing this, the horsepox synthesis, it took $100k and "did not require exceptional biochemical knowledge or skills, significant funds or significant time."

Interestingly the author of the horsepox paper did it in response to an endless debate over whether it would be possible to revive old viruses by just ordering bits of DNA on the internet and assembling them together:

In 2015, a special group convened by WHO to discuss the implications of synthetic biology for smallpox concluded that the technical hurdles had been overcome. "Henceforth there will always be the potential to recreate variola virus and therefore the risk of smallpox happening again can never be eradicated," the group's report said. But Evans felt like the matter was never really put to rest. "The first response was, ‘Well let's have another committee to review it,' and then there was another committee, and then there was another committee that reviewed that committee, and they brought people like me back to interview us and see whether we thought it was real," he says. "It became a little bit ludicrous."

Evans says he did the experiment in part to end the debate about whether recreating a poxvirus was feasible, he says. "The world just needs to accept the fact that you can do this and now we have to figure out what is the best strategy for dealing with that," he says.

However, DNA synthesis companies screen the sequences they get. Smallpox is a banned one whereas horsepox isn't (it doesn't infect humans). Synthesizing smallpox itself wouldn't be the worst one could do: after all it's the virus for which the first vaccine was invented.

There's an ongoing effort to find better ways to screen requests to manufacture DNA (that got some funding from FTX Future Fund), but even this won't be a silver bullet, because one can always invent new sequences for more potent and infectious agents:

Nicholas Evans, the bioethicist, thinks that new rules need to be put in place given the state of the science. "Soon with synthetic biology ... we're going to talk about viruses that never existed in nature in the first place," he says. "Someone could create something as lethal as smallpox and as infectious as smallpox without ever creating smallpox."

I suspect the path to programmatically send some API calls to Twist Biosciences and book someone from Taskrabbit to mix some vials is a complex but not impossible one. Molecular biology is quite finicky and the person doing the mixing on behalf of the AGI would have to have some molecular biology experience. Later I sketch a scenario where an AGI could attempt to make smallpox remotely. It would be great if someone actually tried to do this: given enough time, the planning and execution of a "bioweapons penetration test" should be possible.

Would an AGI want to engineer a plague?

Initially, an AGI would have to work with humans to acquire physical resources. Thus a deadly pandemic would not be the first thing an AGI would go for; the same goes for throwing the world into a nuclear winter. But an AGI could use those as a threat. At that point it's up to governments to decide what their approach is to negotiating with terrorists. If the AGI expects governments won't yield, then it probably won't issue the threat. Issuing the threat in a credible way may also lead to the AGI itself revealing its presence to governments.

However, once the AGI has some power base established that is independent of human activity, it may want to release a plague; perhaps starting to manufacture and position release vectors from the beginning. We could probably use some numbers and historical examples here to see what the reasonable bounds would be for plagues and how disruptive they would be.

After writing this section, I became more convinced that the key use of bioweapons in the short-medium term would be tactical, not strategic; and so one should also consider chemical attacks in a broader analysis (e.g. against specific individuals, military bases, etc).

Manipulation

Some scenarios of AI risk posit a system that can manipulate humans in arbitrary ways. This could obviously be of use in cyberwarfare, as social engineering is already a known way to access computer systems illicitly. Moreover, an AGI could design and launch a number of cryptocurrency projects in parallel and profit from them. This one particularly unhinged project, with anonymous funders, netted some 6 million dollars to one of them (or so I've heard). OlympusDAO, whose founder "Zeus" remains anonymous, had a market capitalization of over a billion dollars at one point. The persuasion here would involve stellar design skills and potentially creating fake Discord and Twitter accounts to shill the project: nothing that hasn't been done before, but here we would see it applied in a highly parallel way and with greater skill. Hence some forms of persuasion could also be used to extract and gain access to resources in a straightforward way.

There are other cases that I find implausible, for reasons I won't go into in depth here, and which I don't take that seriously (the AI Box experiments; here's one example of a roleplayed version of this). Here the idea is that one potential way to contain AGIs, having a human talk to them while preventing the system from accessing the internet directly, would fail because the AGI would manipulate the human into letting it out. If a situation occurs where a system is known or suspected to be an AGI and is developed at an organization that has knowledge of what is going on, and it came down to one person to let the system access the internet, I am very optimistic about the outcome. However, what one thinks of AI Boxing is irrelevant, because in reality what would happen is that the system would pretend to be a human talking to someone else over the internet. That, coupled with the skills of a master-level social engineer, is enough for the system to be problematic. There have been cases where anonymous individuals have ended up giving rise to large political cults like QAnon, but one should not mistake this for being able to systematically orchestrate cults as an anonymous founder for specific ends. The QAnon case happened at an intersection of the right ideas and context, and it's very unlikely one could have derived that such a specific meme would have the effects it did, even if one were superintelligent.

Are there qualitatively different kinds of social manipulation that are available only if one is an AGI? I think mostly no; but this leaves open a large field of possible exploits on vulnerable human psychologies. A related question that seems to have a similar answer: Can we rule out psychohistory? Mostly yes I think.

Human manipulation is an activity that in many forms is legal and highly profitable. Don't think of manipulation as anything coercive or necessarily evil; think of it in less morally loaded terms, as getting others to do what you want by just talking or sharing information with them, without any coercion. Marketing and sales are examples of this, and this is an area where market pressures should have created incentives to discover many useful ways to leverage human psychology. There is some work on this, like Influence (one of the examples of social psychology that replicates!). A more advanced form of this relies on using ML to estimate who will buy a given product and targeting them with relevant ads; but obviously this will rarely convince you to buy something if you weren't already somewhat close to wanting it. Something that would make me more likely to believe in superhuman manipulation would be any case where someone has discovered manipulation techniques that were extremely surprising in how well they work. The longer a field has gone without making breakthroughs, the longer, in my view and all else equal, it will continue not making them (sort of a reverse Lindy effect).

Some objections raised during the review

Carl Shulman points me to this post on the economic value the AGI would have at its disposal by merely existing: Its own weights (or a distillation thereof) are extremely valuable. The system could do all sorts of activities online, from writing books and posting them to Amazon to starting SaaS companies, and rapidly acquire resources. But I don't know the extent to which this is that much of a big deal at first. He also says it would have a strong moral case for human rights; I take this to mean that it may be able to convince some people that it is worthy of human rights and that it is up to nothing nefarious. David Deutsch (See Appendix A) is already convinced of the former, so it's not implausible. But I still see it as unlikely; most people won't grant rights to an AGI, especially one that does not seem to be under any human's control.

He also points to more efficient operation of military equipment as a means to achieve power with fewer resources. This is indeed true; so true that there is at least one company whose whole schtick is that the US military's command and control systems are antiquated and pose an operational burden, a topic explored at length in The Kill Chain.

Another point here is that as we make progress towards AGI, we will have more purpose-specific AI systems capable of some independent action, and that arms race dynamics can lead to chunks of militaries being run by AI long before AGI arrives. Hacking people's brains is hard, but hacking a robot army is comparatively easier?

Some AGI risk vignettes

Ok, so we have a system that's effectively a team the size of Google, that has copied itself across one or two datacenters and deployed some purpose-specific models. All safeguards have failed and we are in the worst case scenario. What could happen next?

- An AGI breaking into, say, a department store's internal records would immediately recognize the name of a key person in the nuclear chain of command of the United States (whereas a regular hacker might not even think of that, nor have an interest in getting control of US nuclear weapons). Knowing that, the AGI would know that the person shops there and when. Then it could get started by having that person's phone stolen, or having a drone go to their house and engage in some Van Eck hacking to steal passwords and private information. Each step of the plan sounds understandable by itself, but being able to think of a large number of complex plans fast is something where it does seem obvious an AGI would have a substantial upper hand.

- As above, figuring out where key members of the US military live, and launching a concurrent decapitation strike using drones and lightweight explosives

- The scenario described in Daemon where the system creates tasks and rewards (perhaps in some cryptocurrency) for real world operatives to carry out.

- Using identity theft (You could get some random's personal details), opening a real world company and associated bank account via e.g. RocketLawyer, Stripe Atlas (or similar). These require no in-person interactions and have relatively lightweight identity verification which could be spoofed by an AGI. Then, an executive assistant or chief of staff would be hired (without initially meeting the "founder", because of some fabricated excuse), and that person would act as a representative offline for tasks that require in-person presence. The founder could pose as some kind of reclusive brilliant engineer that wants to build robots and is self-funding the company with funds from an inheritance. There need not be any public presence of the company initially, though the company would show up as a customer for part providers. Once the AGI has reasonably workable robots at its disposal, the factory could become self-operating. Expanding the factory/area of operations could be done legally or illegally. The former might take too long if building from scratch

- The AGI could be running most of the company, and "fake" a number of employees on an internal Slack so real employees think the company is larger than it is. Accomplishing too much with too few people could make them suspicious

- It is likely that if, say, a factory with human-level robots has been fully automated, someone would eventually notice. The real world employees might say things about their exciting job and how impressed they are with how fast they make progress.

- Plausibly the factories would be spread out across geographies and companies to make detection harder

- Eventually to protect the factories they would need anti-aircraft defenses (lasers? missiles?). Can these be procured if based in corrupt countries by bribing the right person? Does this make the epicenter of AGI takeover more likely in Russia?

- It does seem sci-fi-less instrumental convergence lets us predict some actions an AGI would have to take

- Hacking into defense contractors is possible, and has happened before. The issue wouldn't be stealing design documents for say the F35, but an AGI inserting malware into pieces of equipment being built. Other parts of the supply chain could be attacked as well.

- It might seem hopeless to fully modernize the big defense dinosaurs, but maybe if the advanced AI-powered autonomous weapons are made by faster moving companies that are AI-safety minded, those systems might be hard to hack into. What does Anduril think about AI safety?

After writing a few of these it seems like one could write a very interesting and useful report further developing the risk scenarios presented here. The ones I am interested in are the ones where there's a runaway AGI. There is another set of scenarios (those described by Paul Christiano and Andrew Critch) where we gradually cede control to AI systems and eventually those systems take over. This is a different set of scenarios than the one I have considered here, by design.

Thinking about this also brings to the front the idea that, absent nanotechnology, an AGI needs either humans to cooperate or to manufacture a decent number of general purpose robots to gain any meaningful advantage. It's unclear to me that one can engineer great robots without trial and error and experts on the ground to work on the systems.

Conclusion

I started writing this to clarify my own thoughts about AGI risk (because someone poked at me). In the process of doing that I wanted to write a response to many critiques of AGI risk that are not right; part of making that response was to present a restricted model of risk that is still plausible and more compelling, both for pedagogical reasons, to showcase how AGI could be risky, and as a plausible scenario in itself. Admittedly, this restricted model seems to me more likely than the fast takeoff-into-nanobots model.

With the exception of the kind of scenarios discussed in this post, I haven't spent that much time thinking about this. I haven't thought much about what my own views on timelines or alignment should be. Without deeper consideration, my overall views end up falling in the Drexler-Christiano camp (as opposed to, say, the Yudkowsky-Bostrom camp), with progressively better systems that are task-specific emerging and posing increasing problems. That won't necessarily lead to catastrophe in a robust world (e.g. one with superintelligent task-specific AIs to guard against bad actors).

It's easier for me to clarify what my views are in the short term: the kind of work that OpenAI or DeepMind are doing (GPT-N, Gato, etc.) seems safe to me (a reply to an argument to the contrary is in Appendix B). Additionally, work on AI safety as applied to complex models may stall without progressively developing more advanced systems to study. Ideally the development of the most powerful models happens hand in hand with work on understanding these models and making them safe. This seems to be the case at the most well-funded organizations working on advanced AI systems. I don't have a good sense of how seriously OpenAI or FAIR take safety compared to Conjecture or Anthropic, and there are probably many bars for seriousness. The latter obviously make safety a larger concern than the former, but I don't have enough context to evaluate the position that says that OpenAI is yoloing.

I also think work on an AGI-resilient society is important (think here of biosecurity, cybersecurity, and coordination among owners of AGI-compatible compute capabilities like supercomputing centers or large tech companies). This seems to have been underexplored by the AI risk world (which has focused on making the systems safe and assumed that unsafe deployed systems are uncontainable). A good first step here would be to wargame AI takeover in great detail. Though a theme running through this essay is that there isn't much work on this, I also have to acknowledge Holden Karnofsky (Appendix C) and Ajeya Cotra (Appendix B) for putting forward threat models that are more detailed and plausible than what came in the decades prior. This trend should continue and I hope the community publishes more detailed scenarios.

I did not want to make this post into a survey of AI safety work, but I have some brief thoughts on that as well. A while back (2016) I said that Friendly AI research (what some people called it back then) was futile because we will never be able to prove that a particular AI system is safe. I still subscribe to that view. At least from some distance, it seemed to me back then that the early MIRI efforts were trying to find ways to construct provably safe agents, regardless of their implementations. Be that as it may, it would be a caricature to describe the modern AI safety research ecosystem (even MIRI itself) in this way. However, it still seems to me that AI safety as a whole, if focused only on the agents themselves, is too weak an approach. Progress will be made in better understanding and engineering intrinsically safer AIs, but I am as optimistic or more about work on societal resilience to AGIs than I am about making systems that most surely do no harm; as hard as it may seem, it seems easier and more practical to me to work on governance, improving cybersecurity, etc., than to work on making AI systems safe. This view is probably unusual: it seems there are two big camps; one is doom-by-default, alignment is hard, and the other is doom-can-be-averted, alignment is easier. A third camp is doom can be averted, alignment is somewhere in between in difficulty, but societal resilience to AGI can be increased.

There is also another reason why I stress robustness. In a world where it's easy for an unaligned AGI to take over, it is also easier for an aligned AGI to take over, should its creator want it to, which then brings notions of ethics into alignment: what rules are to be built into the system? Pleasure maximization? Libertarian property-respecting norms? A small group of people being able to unilaterally impose their vision of the good on everyone else does not seem ideal either. Alignment without robustness still gets you power imbalances that I find undesirable.

Some objections raised during the review

The claim that societal robustness is more tractable than alignment is admittedly a bit of a hot take. Ivan Vendrov pointed out:

I agree that "surely do no harm" is basically intractable, but are you claiming that increasing societal resiliency to unsafe AI is more tractable (on the margin) than making AI safer?

Seems really unlikely to me - like suggesting that risks from self-driving cars are best addressed by making highway barriers taller, or nuclear war risks best addressed by building bunkers. We should expect the infrastructure investment required to significantly increase societal resilience dwarfs the investment required to engineer AI systems to be safer.

Which sparked a small thread between him, Jacy Reese Anthis, Vinay Ramasesh, and Josh Albrecht asking what I really mean by this.

It indeed makes more sense to make something safe than to adapt the entire world to account for it not being safe. Ideally AI safety is like airplane safety: airplanes are extremely safe, despite all that could go wrong. All else equal, I also agree that making the environment safer is more costly than making a single system safer. Difficulty of alignment aside, there may be little choice: considering bad actors and power imbalances, curtailing the blast radius of AGIs still seems worthy of more attention.

Appendix A: Responses to critiques of AI risk

I searched for critiques of AI risk and replied to a number of them. The most popular points, and my brief replies below:

- That "intelligence" is not well defined and that different systems can be better than each other at specific narrow capabilities. Systems that are designed with a narrow objective in mind can beat general systems that can accomplish many tasks.

- That "generality" is a pipedream. Human intelligence is not general and it is unlikely that AI systems will be fully general.

- That "recursive self-improvement" is extremely hard because of real world constraints. Real world research takes time and trial and error.

We can grant all these points if we wish, and in fact my reasoning in this essay works around them: We can agree that human intelligence is not general (In fact we are bested by AIs in narrow domains already!) and we can agree that there may be domains where AIs don't perform well. We can also agree that domain-specific systems with more limited compute power can win against general purpose agents with the same computational budget. But a general agent can choose to deploy specialized agents for specialized tasks.

Steven Pinker

Pinker dismissed concerns about AI safety in an article from 2018 for two reasons, both bad:

The first fallacy is a confusion of intelligence with motivation—of beliefs with desires, inferences with goals, thinking with wanting. Even if we did invent superhumanly intelligent robots, why would they want to enslave their masters or take over the world?

First, AGI systems could be given nefarious goals. The fact that somewhere someone could do that should be reason enough to worry! Second, even if given a benign goal, like making the world's best cheesecake, the system could end up fighting mankind for resources. I have not addressed this second case in this essay on purpose, but my assumption that a malign AGI is developed allows me to set aside Pinker's first fallacy.

The second fallacy is to think of intelligence as a boundless continuum of potency, a miraculous elixir with the power to solve any problem, attain any goal. The fallacy leads to nonsensical questions like when an AI will “exceed human-level intelligence,” and to the image of an ultimate “Artificial General Intelligence” (AGI) with God-like omniscience and omnipotence.

This is something I addressed earlier in the Why AGI makes sense section. Pinker however does have good points: Just because a system has superhuman intelligence and speed does not mean it can quickly accomplish any goal:

Even if an AGI tried to exercise a will to power, without the cooperation of humans, it would remain an impotent brain in a vat. The computer scientist Ramez Naam deflates the bubbles surrounding foom, a technological singularity, and exponential self-improvement: Imagine you are a super-intelligent AI running on some sort of microprocessor (or perhaps, millions of such microprocessors). In an instant, you come up with a design for an even faster, more powerful microprocessor you can run on. Now…drat! You have to actually manufacture those microprocessors. And those [fabrication plants] take tremendous energy, they take the input of materials imported from all around the world, they take highly controlled internal environments that require airlocks, filters, and all sorts of specialized equipment to maintain, and so on. All of this takes time and energy to acquire, transport, integrate, build housing for, build power plants for, test, and manufacture. The real world has gotten in the way of your upward spiral of self-transcendence.

François Chollet

Chollet has a post where he dismisses the possibility of an intelligence explosion. This is when an AI system improves itself, then the new improved system is able to design an even better system and so forth. In the scenario I described above such an outcome is explicitly not allowed (We assume the AGI already starts close to optimal performance). Hence the critique doesn't apply to my arguments above.

He makes similar arguments to Pinker's: there is no free intelligence lunch.

In particular, there is no such thing as “general” intelligence. On an abstract level, we know this for a fact via the “no free lunch” theorem — stating that no problem-solving algorithm can outperform random chance across all possible problems. If intelligence is a problem-solving algorithm, then it can only be understood with respect to a specific problem. In a more concrete way, we can observe this empirically in that all intelligent systems we know are highly specialized. The intelligence of the AIs we build today is hyper specialized in extremely narrow tasks — like playing Go, or classifying images into 10,000 known categories. The intelligence of an octopus is specialized in the problem of being an octopus. The intelligence of a human is specialized in the problem of being human.

The no-free-lunch theorem doesn't quite work if one limits the environment to one in particular (Earth) and has a goal in mind (takeover). Chollet also argues against recursive self-improvement, but we have shown earlier in the essay that there are plausible scenarios of concern that don't involve that.
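To make the point about restricting the environment concrete, here is a toy sketch (my own illustration, not something from Chollet's post). It assumes a deliberately tiny setting: binary functions on four points, and two hypothetical non-adaptive search strategies that each get two queries. Averaged over every possible function the two strategies tie, which is the flavor of the no-free-lunch result; restricted to a structured family (here, non-decreasing functions, standing in for "one particular environment"), one strategy clearly wins.

```python
from itertools import product

# Toy setting (an assumption for illustration): functions from {0,1,2,3} to {0,1},
# each represented as a tuple of four output values.
ALL_FUNCTIONS = list(product([0, 1], repeat=4))

# A structured "environment": only non-decreasing functions are possible.
MONOTONE_FUNCTIONS = [
    f for f in ALL_FUNCTIONS if all(f[i] <= f[i + 1] for i in range(3))
]

# Two hypothetical non-adaptive search strategies: fixed query orders.
LEFT_FIRST = [0, 1, 2, 3]
RIGHT_FIRST = [3, 2, 1, 0]


def best_found(f, order, budget=2):
    """Highest value seen after querying the first `budget` points in `order`."""
    return max(f[x] for x in order[:budget])


def average_score(functions, order, budget=2):
    """Mean of best_found over a family of functions."""
    return sum(best_found(f, order, budget) for f in functions) / len(functions)


if __name__ == "__main__":
    for name, family in [("all functions", ALL_FUNCTIONS),
                         ("monotone only", MONOTONE_FUNCTIONS)]:
        left = average_score(family, LEFT_FIRST)
        right = average_score(family, RIGHT_FIRST)
        print(f"{name}: left-first={left:.2f}, right-first={right:.2f}")
```

Over all sixteen functions neither strategy has an edge, but once the family is restricted, knowing the structure (search from the right) pays off. The analogy to the argument above is loose: Earth is one structured environment, so a theorem about averages over all possible environments says little about performance in it.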

David Deutsch

David Deutsch's dismissal starts from the (disputable) point of view that in general AGIs would be people, as articulated here, and continues to argue that

Some hope to learn how we can rig their programming to make them constitutionally unable to harm humans (as in Isaac Asimov’s ‘laws of robotics’), or to prevent them from acquiring the theory that the universe should be converted into paper clips (as imagined by Nick Bostrom). None of these are the real problem. It has always been the case that a single exceptionally creative person can be thousands of times as productive — economically, intellectually or whatever — as most people; and that such a person could do enormous harm were he to turn his powers to evil instead of good.

These phenomena have nothing to do with AGIs. The battle between good and evil ideas is as old as our species and will continue regardless of the hardware on which it is running. The issue is: we want the intelligences with (morally) good ideas always to defeat the evil intelligences, biological and artificial; but we are fallible, and our own conception of ‘good’ needs continual improvement.

And elsewhere

But people—human or AGI—who are members of an open society do not have an inherent tendency to violence. The feared robot apocalypse will be avoided by ensuring that all people have full “human” rights, as well as the same cultural membership as humans. Humans living in an open society—the only stable kind of society— choose their own rewards, internal as well as external. Their decisions are not, in the normal course of events, determined by a fear of punishment

The worry that AGIs are uniquely dangerous because they could run on ever better hardware is a fallacy, since human thought will be accelerated by the same technology. We have been using tech-assisted thought since the invention of writing and tallying. Much the same holds for the worry that AGIs might get so good, qualitatively, at thinking, that humans would be to them as insects are to humans. All thinking is a form of computation, and any computer whose repertoire includes a universal set of elementary operations can emulate the computations of any other. Hence human brains can think anything that AGIs can, subject only to limitations of speed or memory capacity, both of which can be equalized by technology.

Deutsch is not particularly great here. Following the same ideas as in the rest of the essay, we can grant as much as possible to Deutsch and see that even then the argument does not work: we can grant that AGIs would be sentient (or not), or that they would be as deserving of rights as humans are. We can grant that we will have radically better tools for thought. Even with all that, the argument he tries to make doesn't work; my reply to what he says: