Was Planck right? The effects of aging on the productivity of scientists

Note: I am using Andy Matuschak's new Orbit project to add spaced repetition prompts to the blogpost to help you remember the content. Let me know what you think!

Scientists are getting older. Some have expressed concern at that fact. While the motivations for that concern are not always explicit, it usually boils down to two: One, a matter of fairness. Science getting older may mean that it's not making room for younger scientists; older scientists would be sitting in a limited number of chairs for life, so to speak. And two, a matter of efficiency, perhaps the dominant concern. This is the idea that science has a crucial need for young scientists in particular to come in untainted by prior knowledge and dogmas and provide a steady stream of breakthroughs at a rate higher than what their older peers can hope to accomplish.

Historically many scientists have subscribed to this latter view, from physicists to molecular biologists, some of whom indeed did carry out their breakthrough research when they were quite young. Einstein was 26 during his annus mirabilis, Newton was 23 during his and Darwin was 29 when he (after reading Malthus) conceived the theory of evolution by natural selection.

A new scientific truth does not triumph by convincing its opponents and making them see the light, but rather because its opponents eventually die, and a new generation grows up that is familiar with it (Planck)

I strongly believe that the only way to encourage innovation is to give it to the young. The young have a great advantage in that they are ignorant. Because I think ignorance in science is very important. If you’re like me and you know too much you can’t try new things. I always work in fields of which I’m totally ignorant… (Brenner)

A person who has not made his great contribution to science before the age of 30 will never do so. (Einstein)

Age is, of course, a fever chill that every physicist must fear. He’s better dead than living still when once he’s past his thirtieth year (Dirac)

A few naturalists, endowed with much flexibility of mind, and who have already begun to doubt on the immutability of species, may be influenced by this volume; but I look with confidence to the future, to young and rising naturalists, who will be able to view both sides of the question with impartiality. (Darwin)

Men of science ought to be strangled [at their sixtieth birthday] lest age should harden them against the reception of new truths, and make them into clogs upon progress, the worse, in proportion to the influence they had deservedly won (Huxley)

I do not expect my ideas to be adopted all at once. The human mind gets creased into a way of seeing things. Those who have envisaged nature according to a certain point of view during much of their career, rise only with difficulty to new ideas. It is the passage of time, therefore, which must confirm or destroy the opinions I have presented. Meanwhile, I observe with great satisfaction that the young people are beginning to study the science without prejudice, and also the mathematicians and physicists, who come to chemical truths with a fresh mind - all these no longer believe in phlogiston. (Lavoisier)

No mathematician should ever allow himself to forget that mathematics, more than any other art or science, is a young man's game (Hardy)

But is it true? Let's find out!

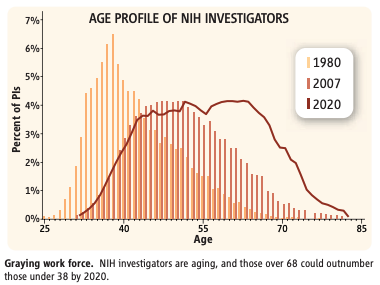

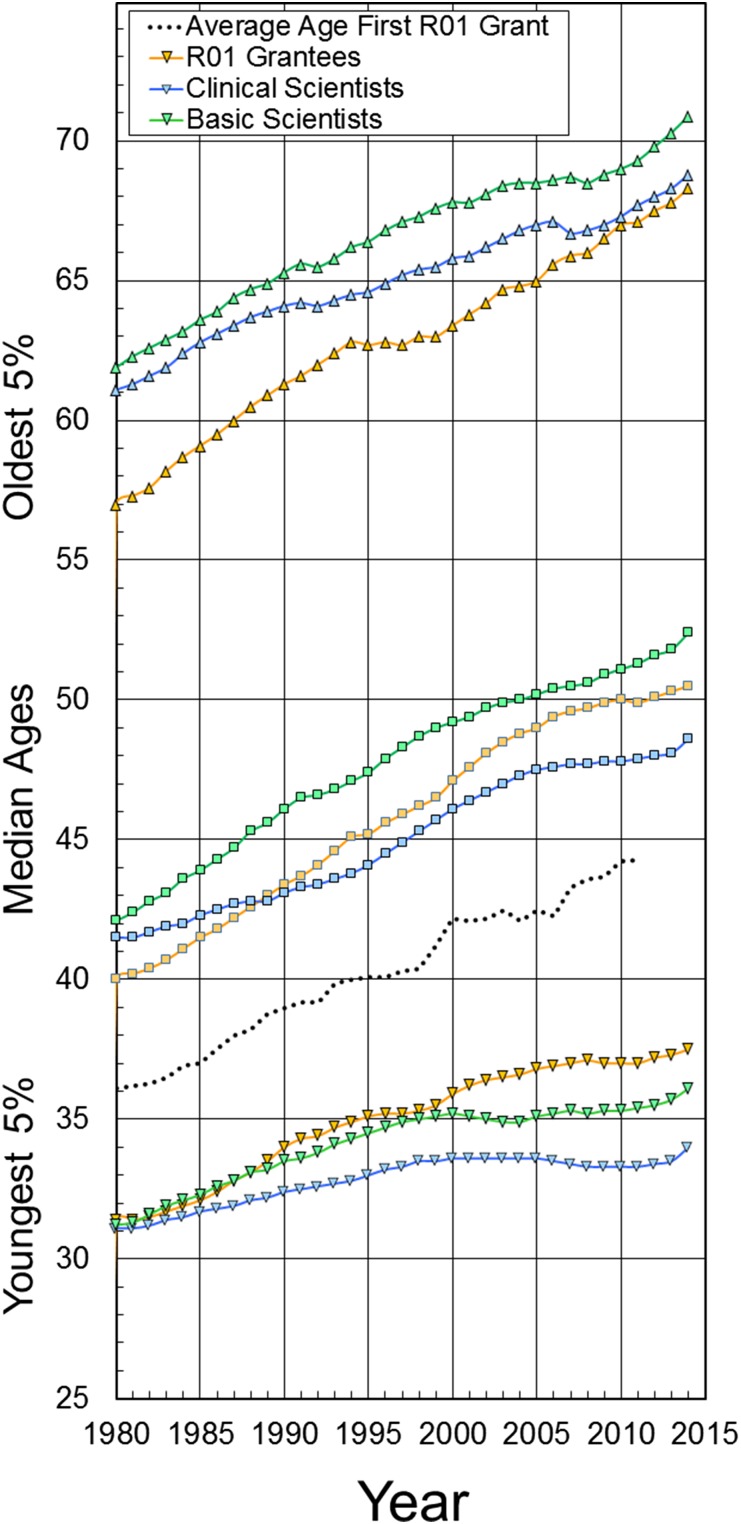

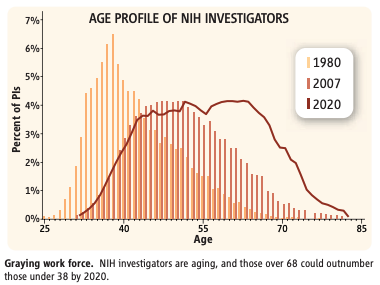

Scientists are getting older

One can look at the NIH in the US or at the scientific workforce in general, but the result is essentially the same: the scientific workforce is getting older. At NIH, the average age at which investigators obtained their first R01 grant was 43 in 2016, up from 35.7 in 1980. Note that this is for Principal Investigators, not everyone funded by NIH grants (that would include PhD students and postdocs, who probably outnumber PIs by a factor of perhaps 5-6).

It doesn't matter how you slice the data: the oldest scientists getting grants are older now, and the youngest ones are not as young as they used to be:

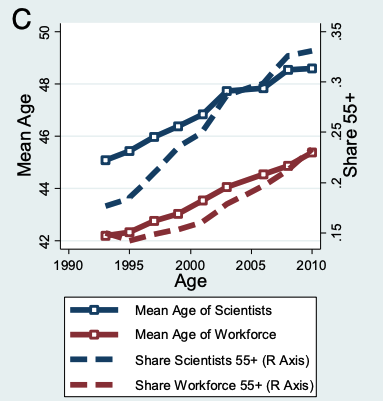

And furthermore it's not just NIH. The scientific workforce, which has historically been older than the average worker in the US, keeps getting older.

There are four core explanations for why the scientific workforce is getting older.

One is simply that the workforce at large is getting older due to a combination of reduced fertility rates and increased life expectancy. The immigration of foreign scientists to the US slightly improves the trend as they tend to be younger (Figure SI-20) but the effect is not sufficient to offset the broader trend.

The second explanation is that up until 1994 professors in the US were forced to retire at age 70 (Europe continues this policy), which put an artificial cap on the length of scientific careers and thus on the average age (Here's one of the reports that led to the policy change; they found concerns about the productivity of older scientists unfounded). These two factors, however, don't really explain much of the increase; rather, it's the aging of the baby boom cohort that accounts for the largest share of the effect in recent years (the third explanation).

First, we show that “demographic momentum” in the form of the aging of the large baby boom cohort has driven much of the recent rapid aging of the scientific workforce, and will continue to do so for the next two decades as the later cohorts of the baby boom pass through their 60s and early 70s. However, sharp declines since 1993 in the rate at which scientists retire from employment can account for 8% of the increase in the mean age of scientists. The decline in retirement was most likely triggered by the elimination of mandatory retirement at universities in 1994. We also find that the aging of the workforce as a whole (due to lower fertility) accounts for 13% of the increase in the mean age of the scientific workforce. Second, we show that the scientific workforce was very far from its implied steady-state age distribution when our analysis begins in 1993 (4.9 y younger on average). Strikingly, the scientific workforce remains far from steady state even as of 2008—current entry, exit, and transition rates imply that the mean age of the scientific workforce will increase by another 2.3 y from that level.

Once steady state is reached then we'll be back to plain population aging as the driving factor, leading to the fourth explanation (the burden of knowledge) that will be discussed later.

Does the establishment discriminate against young scientists?

Scientists are getting their first grants later. One possible reason is some kind of bias against younger scientists. This could come from their PI, from the academic community in general, or from the specific review committees that assign grants (study sections in the case of NIH). This discrimination may come in various forms. Older academics may discriminate against younger ones because the younger scientists want to explore new ideas that their seniors don't approve of (ideas they think are not good science), or because the younger scientists haven't yet mastered the art of writing grants the way reviewers like them.

NIH does have some programs specifically targeted both at New Investigators (those that seek their first grant) and Early Stage Investigators (ESI, those that are within 10 years of getting their PhD) and has done so for years, constantly reviewing and tweaking specific awards targeted at these groups. Of particular note are the DP5 (Early Independence Award) and the DP2 (New Innovator Award) grants, as well as the explicit policy to give special treatment to ESIs during their assessment by study sections (Here's what these look like).

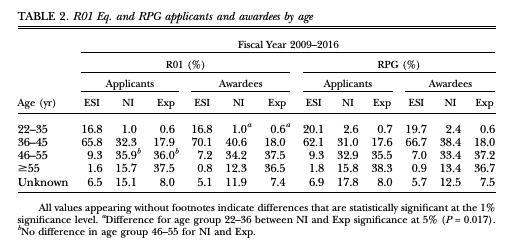

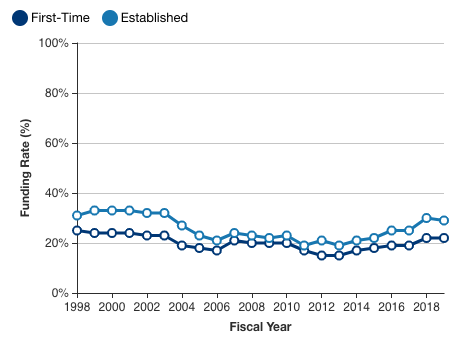

At a more granular level, one can also see that there is a rough correlation between how many scientists of an age group apply for a grant and how many get them (Here RPG is Research Project Grants, a category which includes R01 grants). This was not always the case: the success ratio of older PIs seemed to be higher in the past. If there is a bias here, it must be small.

When one splits the data into clinical science and basic science and restricts the analysis to the pre-2010 time frame, the success rate for these young basic science investigators is lower (Figure 5). So maybe there used to be substantial bias, but not any longer thanks to the NIH policies (Giving special consideration to ESIs during review is a practice that started in 2008)? That seems to depend on how you cut your data. The NIH has more recent data, and this shows that while there was a squeeze starting in 2004-2006, bringing together the success rates of first-time and established PIs, these rates have started to diverge again:

But note, this is NOT seen when broken down by age. What this means is that the bias is not against young investigators, but against new investigators (who will often but not always be young). After all, 15.7% of R01 applicants in the New Investigator category were over 55. A plausible explanation for this pattern is that grantsmanship is an art that has to be learned. Knowing what your study section likes takes time, and a newcomer may have trouble acquiring such knowledge. Moreover, this bias may be especially problematic if the people who are new to a field (because they are young or because they come from another field) are bringing in new ideas that can have a disproportionate effect on the field.

Why would a university hire a scientist proposing to undertake a novel research program after only a few years of postdoc training, when the institution could hire someone with several more years of training, many more publications, and a plan to continue an already productive research program?

So: If there's a bias, it's against newcomers who have to learn how to get money, not necessarily young people. So the workforce is getting older due to "natural" forces, not a bias that keeps young researchers out. That leads us to look at scientific productivity and its relation to age, but before that, do note that these results vary by institute. At NIDCR as well as the NCI (Table 1) the opposite holds: new investigators are twice as likely to get funding in the former case, and ESIs are 50% more likely in the NCI's case.

An additional problem is that reviewers may use (consciously or unconsciously) reputation, prestige, or eminence as a criterion to approve a grant application. There is some rationality to this practice, but it also means there's a Catch-22 for newcomers: they may not get grants because they are not known in the field, and they are not known in the field because they don't get grants to do research in that field.

The increasing burden of knowledge

A reason why it takes longer to go from graduate education to being an academic is that it takes time to learn. We now know more than we used to! Benjamin Jones dubbed this issue the "burden of knowledge" back in 2009. Matt Clancy has a good explainer of this here, with some discussion on the effect of splitting this knowledge across multiple heads:

On the other hand, the burden of knowledge may, itself, make breakthroughs more difficult! As discussed in more detail in a previous newsletter, there is some evidence that teams are less likely to produce breakthrough innovations. This might be because it’s harder to spot unexpected connections between ideas when they are split across multiple people’s heads. In that case, the burden of knowledge can become self-perpetuating.

Azoulay et al. (2013) say that there is little we can do about it!

If increased knowledge burden is the most relevant prism through which we should interpret the pattern documented in figure 2, there would seem to be very little that scientific agencies in general, and the NIH in particular, could or should do to counteract this trend.

But this would be like saying to Tesla "If lack of charging stations is a relevant reason why people don't buy electric cars there's little you can do". No! The right thing is to think about whether there are ways to reduce the time required to acquire such knowledge. Academic learning should not take that long. It can't take that long. To what extent academic education can be compressed I don't know, but from my own experience learning about various areas, there are lots of inefficiencies in finding the right things to learn. Maybe the solution to the problem is a Wikipedia of science and the social norms required to keep it working, sort of like the SEP, a collection of rolling systematic reviews. Then add some SRS. Maybe one could embed prompts in papers so that they are more easily remembered, and with more facts in memory, there is more potential for finding new connections. While these are interesting thoughts, this is not the place to pursue them, so I'll move ahead to the next section.

Do cognitive skills decline with age?

Intelligence

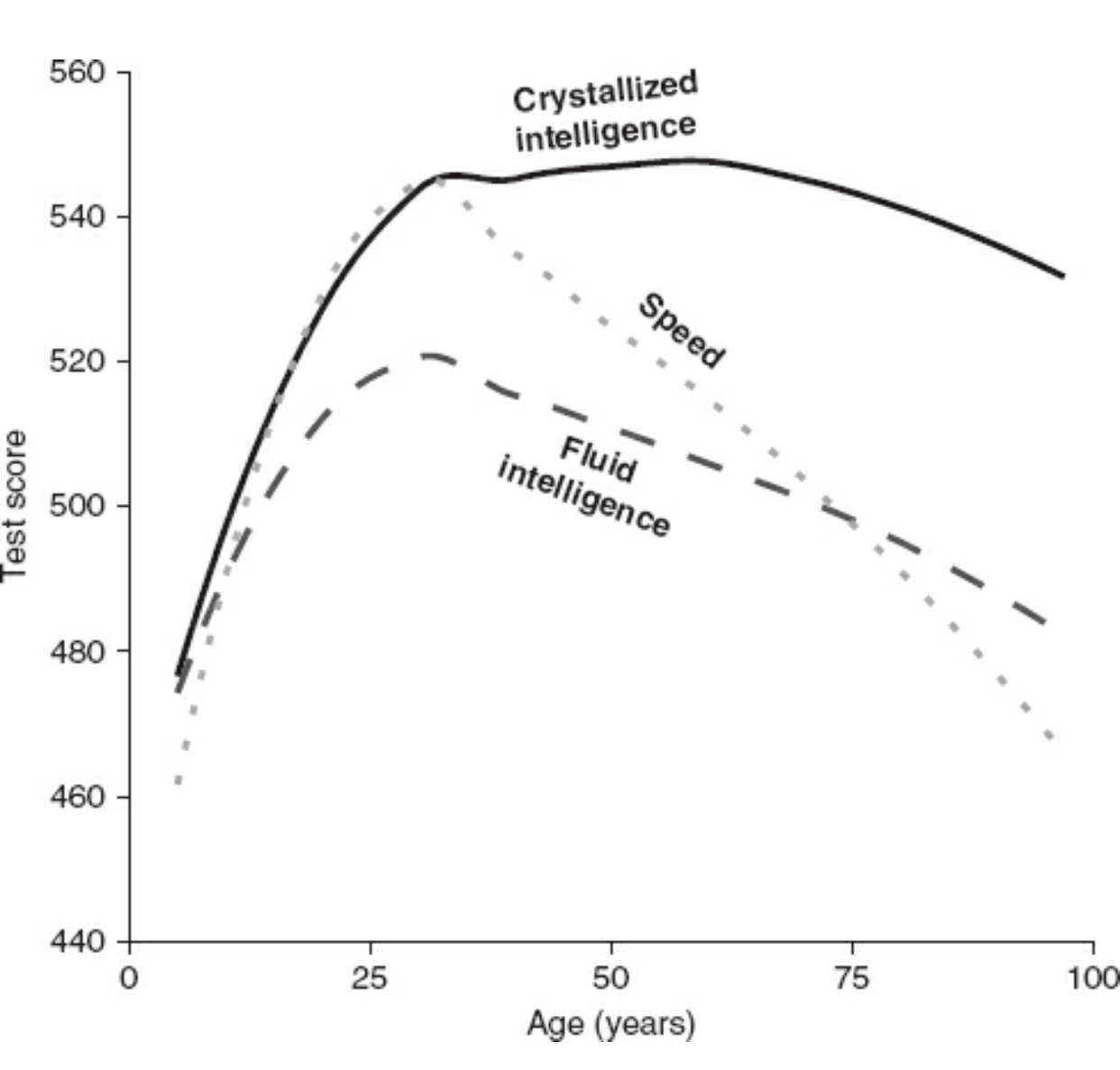

In short, yes they do. They do so at different rates, and this is key to understanding some patterns of success by age in different fields.

First, looking at general intelligence, the accepted finding is that fluid intelligence starts declining in our 30s.

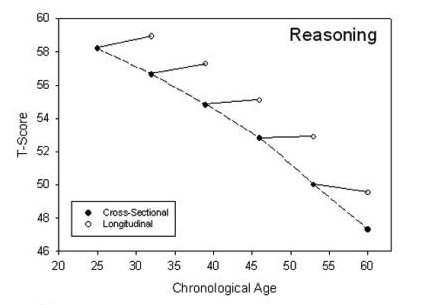

This finding has been hotly debated, with some researchers arguing that the decline doesn't happen until much later in life. Ultimately Stuart Ritchie, whom I trust on this, prefers the early-decline model and so do I. The controversy boils down to the fact that cognitive skills have been improving over time: a 70-year-old today functions better than a 70-year-old did 50 years ago. If one pools data for people born at different times (a cross-sectional study), that background trend will distort the findings, making it seem like decline happens early. So we should track the same people, doing a cohort or longitudinal study, and see what happens over their lifetime. This has its own problem: if you test people more often, they may learn to do better on the tests! Ultimately one has to correct for both effects. This is what the baseline cross-sectional vs longitudinal effects look like:

This is at an aggregate level; individually, unsurprisingly, there is a lot of variation. One person aged 75 or older can still be more mentally agile than someone who is 50. To turn it back to the case of science, lithium-ion battery pioneer John Goodenough is 98 years old and still leading his lab, and while he's not as lucid as he used to be (one of his PhD students tells me), he has managed to keep the lab at the forefront of the field. Plus he did his Nobel Prize-winning work when he was over 50. He has also recently tried to chase high-risk science, perhaps so risky that it may violate the laws of thermodynamics and actually not work (The battery will be commercially developed, so that's when we will know if it succeeded). In any case, he's risking his reputation to try something new, contrary to the stereotype of the old scientist who turns conservative and risk averse. As a thought experiment, if you could choose between the status quo and prematurely retiring John Goodenough + adding a new young PhD student to the field, it seems the former is to be preferred. The lab headed by George Church (He's 66) is another example of great work being done later in life.

Note that this decline is real no matter how you use your brain: IQ at a young age predicts IQ at an older age regardless of profession or engagement in "cognitive-intellectual" abilities. So we may safely infer that these curves apply to scientists just as they do to the general population, with the caveat that their starting points will typically be higher, so the average scientist at 70 years old will be better functioning than the average person of the same age.
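To make the cross-sectional vs longitudinal confound discussed above concrete, here is a toy calculation in Python. Everything in it is a made-up assumption for illustration: the `score` function, the cohort gain of 0.25 points per birth year, and the decline of 0.5 points per year starting at age 60 are not estimates from any study, and the practice effect from repeated testing is left out entirely. The point is only that pooling people born in different years can manufacture an apparent early decline.

```python
def score(birth_year, age, cohort_gain=0.25, onset=60, decline=0.5):
    """Hypothetical test score: later cohorts start higher, and ageing
    only subtracts points once `age` passes `onset` (all values assumed)."""
    cohort_effect = cohort_gain * (birth_year - 1950)
    ageing_effect = -decline * max(0, age - onset)
    return 100 + cohort_effect + ageing_effect

ages = (30, 50, 70)
test_year = 2020

# Cross-sectional: test everyone in the same year, so each age group
# comes from a different birth cohort.
cross_sectional = {age: score(test_year - age, age) for age in ages}

# Longitudinal: follow the single cohort born in 1950 as it ages.
longitudinal = {age: score(1950, age) for age in ages}

for age in ages:
    print(age, cross_sectional[age], longitudinal[age])
```

With these assumed numbers, the 2020 snapshot shows a 5-point gap between 30-year-olds and 50-year-olds even though, by construction, nobody followed over time loses anything before 60. The real debate is about how much of the observed early decline survives once such cohort effects (and practice effects) are corrected for.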

Creativity

Intelligence is not all that matters; plausibly, for scientific skill one should look at how creativity changes. Creativity is a flimsier and less rigorously defined construct than intelligence, so these results are not as robust as those for intelligence. How do you test creativity? You can ask people to come up with alternative uses for a given object, for example. In a sample of 293 people covering ages 15-64 there was no effect of age to be found, while a study of a sample of 150 people that included even older people found strong age-dependent effects in a different test, with older subjects scoring lower (Figure 3).

Simonton, a known researcher in the field of creativity, finds a pattern of decline similar to that of IQ, a pattern he claims fits the whole range of creative endeavors from artists to scientists. Fitting with the patterns for crystallized and fluid intelligence, for scholars (think historians) the decline is less marked, perhaps because for History in particular raw knowledge of a broad range of facts is more important than being able to rapidly generate creative new hypotheses and inventions, so older historians can still contribute. Seligman, Forgeard and Kaufman (2016) point to a general decline of most abilities that one may associate with creativity, from intelligence (already discussed) to originality, or mind-wandering / having task-unrelated thoughts. Like Simonton, they say older subjects can still be creative by leveraging their wisdom in the form of heuristics. In the case of science, a younger researcher may be able to rediscover from first principles how to do something in a way an older one cannot, but by force of decades of doing the work, a veteran scientist can just see it, pattern matching to previous examples. But this is no substitute for sparks of insight. After all, these heuristics are based on previous examples. If there's something new that doesn't fit past experience, the heuristic may regard it as implausible, whereas a younger scientist reasoning from first principles may think it's doable. This may be the source of Clarke's first law:

When a distinguished but elderly scientist states that something is possible, he is almost certainly right. When he states that something is impossible, he is very probably wrong.

Scientific productivity and age

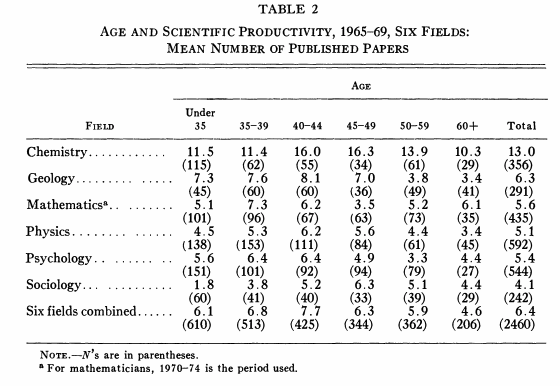

Ok that was for cognitive skills in general (as I couldn't find a study looking specifically at scientists). Based on that, it would be theoretically possible for older scientists to make up for their loss of fluid cognitive skills with the knowledge and heuristics that they accrue over their lifetime. So to better understand the relation between age and contributions to science, we have to narrow down to studies that look specifically at scientists. There are various types of these; the simplest ones just measure the number of papers written per year. By that metric, some work finds that scientists publish increasingly more until they turn 50, and then begin a slow decline. Moreover, this last paper also finds that around age 40 the age of the literature these scientists cite starts to increase, suggesting that they may not be keeping up with more recent trends and are working inside an established paradigm instead. The paper also says that even late in life scientists keep publishing high impact work (as measured by citations).

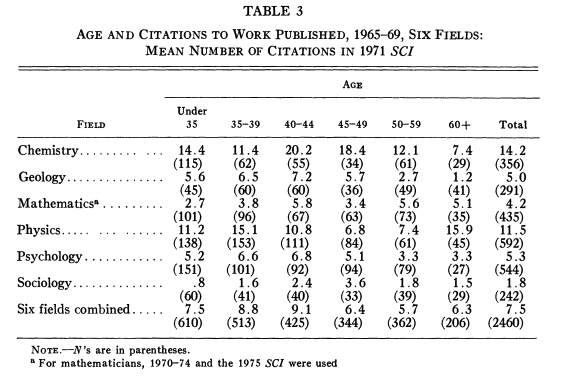

In older work, Cole (1979) found that there is a similar pattern at work across a range of disciplines spanning mathematics to sociology.

With citations the pattern is less clear, and in my view compatible with the likelihood of producing highly cited work being similar at any point in life. Citations are not that great of a proxy, because they also count reviews (which are not cutting edge science, yet can be highly cited).

Even when looking at mathematics, a field where we may expect younger scientists to have an advantage (inasmuch as mathematics relies more on fluid intelligence relative to, say, biology), Cole examines a cohort of mathematicians as they publish over the years and finds no correlation between age and either citations or papers published. He also finds that publications at one point in time predict publications later, so "strong publishers" in the years immediately after their PhD can be expected to continue to be so decades later.

This remains to this day the accepted view of scientific productivity broadly speaking, known as the random impact rule: highly impactful work may come at any time, and when it does it tends to be followed by more within the next ~4 years, a "hot streak" effect. For most scientists (68%) this happens just once, so if a scientist has already produced a burst of highly cited work, that probably won't happen again for that individual scientist. Nobel Prize winners are peculiar in that having two of these hot streaks is more common for them, and each streak lasts longer (5.2 years) than for the average scientist.

Highly impactful contributions

This may not be true for contributions directly judged to be impactful (like the Nobel Prize), the far right tail of the distribution of all scientific work. Li et al. (2019) and Li et al. (2020) find that the random impact rule also applies to the work that Nobel Laureates do in general, with one crucial exception: the work that leads to the Nobel Prize tends to happen earlier in life, against what the random impact rule would predict. If that work is removed from their careers, Nobel Laureates' work also follows the random impact rule. The authors interpret the early timing of prize-winning work as a bias in the data: because the Prize cannot be awarded posthumously, there will be fewer older researchers represented in it.

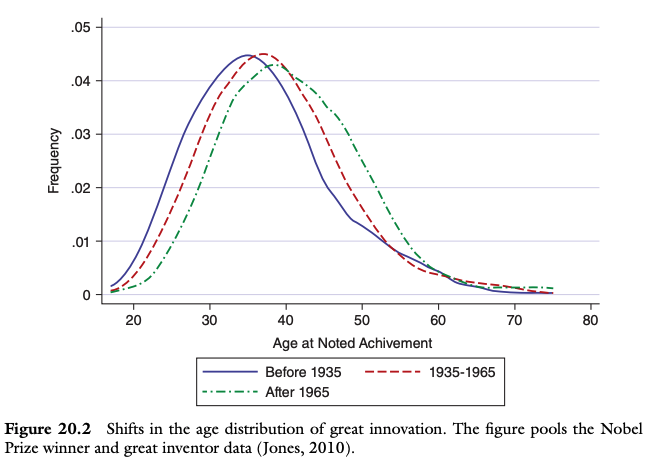

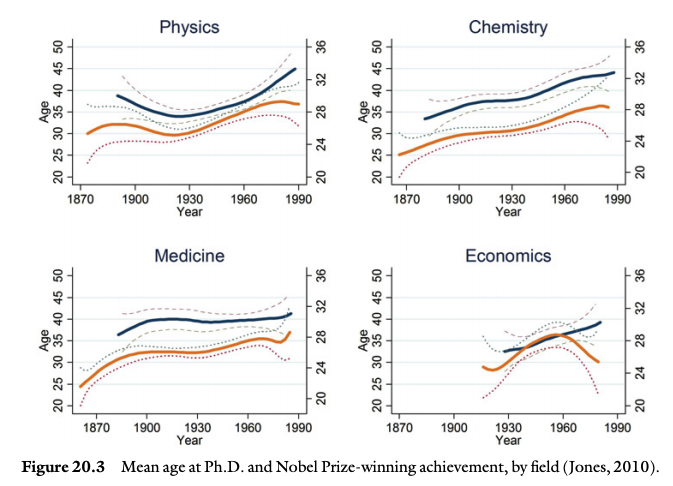
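The posthumous-award bias mentioned above can be illustrated with a small sketch. The model is deliberately crude and every number in it is a hypothetical assumption of mine, not something taken from Li et al.: prize-worthy work is equally likely at any age from 30 to 70 (a stand-in for the random impact rule), recognition takes 25 years, and everyone lives to exactly 80. The helper `observed_prize_ages` is likewise just an illustrative name.

```python
from statistics import mean

def observed_prize_ages(work_ages, recognition_delay, lifespan):
    """Keep only the ages at which prize-worthy work was done by people
    still alive when the prize would be awarded (no posthumous prizes)."""
    return [age for age in work_ages if age + recognition_delay <= lifespan]

# Assumption: one scientist per age, i.e. prize-worthy work is uniformly
# spread over ages 30..70.
work_ages = list(range(30, 71))

laureate_ages = observed_prize_ages(work_ages, recognition_delay=25, lifespan=80)

true_mean = mean(work_ages)          # average age across all prize-worthy work
observed_mean = mean(laureate_ages)  # average age among actual laureates

print(true_mean, observed_mean, max(laureate_ages))
```

Under these assumptions the true average age of prize-worthy work is 50, but among those who live to collect the prize it is 42.5, and nobody who did the work after 55 appears at all. Real lifespans and recognition delays vary, so the size of the effect here means nothing; only its direction matters.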

The average age at which Nobel-winning research was done, for prizes awarded after 1995, is 44 (Bjørk, 2019). That age used to be lower in the past (see also chapter 20 from the Handbook of Genius) and is a bit lower (42) for physics. The pattern is similar for both Nobel Prize winners and great inventors.

What about Einstein though? The birth of modern physics is the exception that confirms the rule and that can be eyeballed in the data, again from chapter 20.

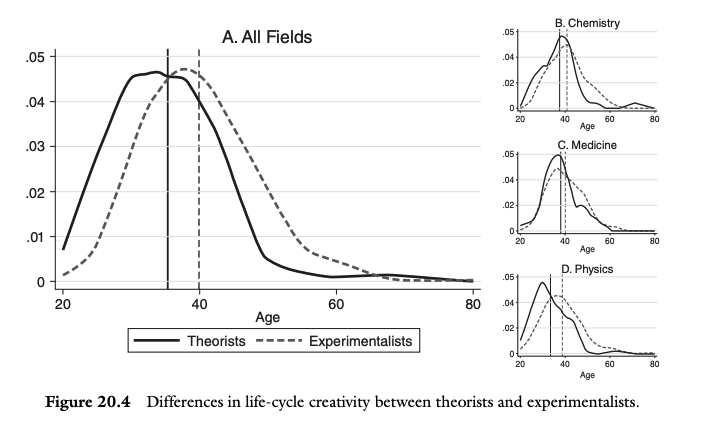

The rationale for this is, following the same source, that theoretical breakthroughs are usually made by younger scientists, perhaps because experiments require deeper knowledge of the field and theory can be done from an armchair (think of Galileo's and Einstein's Gedankenexperiments). In the case of modern physics, during that period of change it may have been that old theories and heuristics were suddenly invalid, leaving room open for those younger scientists and preventing older scientists from using their comparative advantage. That naturally leads to the next question: Is this just because the older scientists are relatively less able to do novel work? Or is it because they don't want to, namely because they reject the new paradigm?

Dietrich and Srinivasan (2011) look not at when Nobel Laureates published their key work, but at when the insights leading to that work happened. These are by definition earlier, and in their view sufficiently early to shift the curves so far to the left that they conclude that paradigm-busting ideas occur overwhelmingly to people in their 20’s and early 30’s, which they take as an indication that a nimble prefrontal cortex, and thus chronological age, is a critical factor. They also examine whether career age (as opposed to chronological age) determines when a scientist will have Nobel-worthy ideas and find a sharp drop off after the second decade of being active in a field, with most of the ideas occurring during the first year. However, their paper does not break down patterns by time, so it's still possible that more recent Laureates are older than what they suggest; nor does it account for the bias introduced by the non-existence of posthumous prizes.

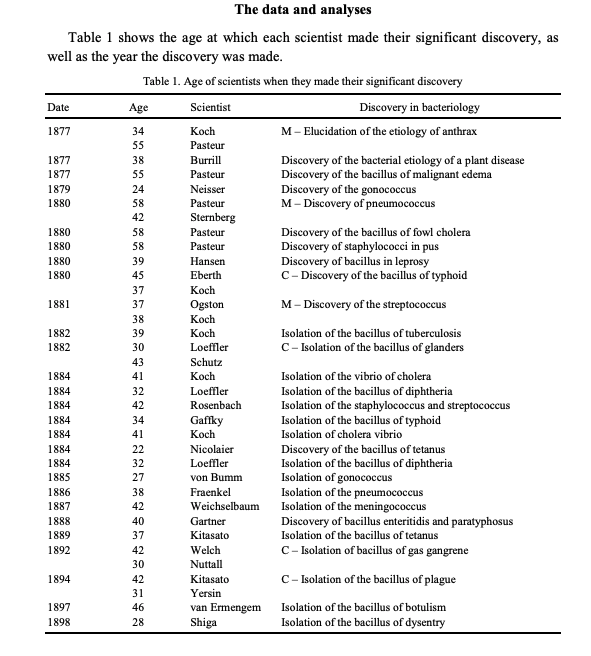

The findings here are aggregated, and one can zoom in on particular episodes in the history of science where it wasn't young scientists making the key advances but middle-aged ones. One such case, described in Wray (2004), is the development of bacteriology in the period 1877 to 1898.

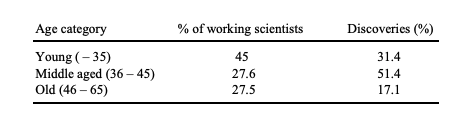

But still! Do note that while this skews the discoveries towards middle-aged scientists, it does also show that older scientists are even less likely to have contributed in this particular episode.

A more recent stab at this problem is Packalen & Bhattacharya (2015). What they do is take all biomedical publications from 1946 and compare the tendency of young and old scientists to cite new ideas. They find that younger scientists do indeed cite novel ideas more often, but in a team setting a combination of young and more experienced authors leads to the largest effect, more than what a team of only young scientists would produce. This is probably the standard combination one finds in science, with an older PI and younger postdocs. The magnitude of the effect is that researchers 40 years into their careers cite new ideas somewhat less (15%) than researchers just starting out (22%).

So in conclusion, it looks like very impactful work can happen at any point in life.

Are older scientists less receptive to new ideas?

As stated at the beginning of this post, Planck's principle holds that older scientists are not so much convinced that they are wrong as that they take their wrongness with them when they die. There is some a priori reason to think that this is true from the considerations above. But one could also argue that having accrued more knowledge and, where a tenure system allows older scientists to freely pursue new ideas without fear of being fired, having obtained tenure could be beneficial to finding and pursuing new ideas. So it's ultimately an empirical question!

One route is to look at particular episodes in the history of science and see how scientists of different ages reacted. Take Darwinism. Hull et al. (1978) studied whether acceptance was faster among younger scientists than among older ones. Recall that Darwin himself thought that it was the younger ones who were more open to natural selection. Ten years after the publication of Darwin's book, 50 of the 67 scientists in their sample had been converted, and by that time the average age of the accepters was 39.6 vs 48.1 for those who remained unconvinced. But these averages hide lots of variability, and ultimately age explains less than 10% of the variation in acceptance rates. Moreover, among those scientists who accepted the theory, age did not seem to matter for how early they came to accept it. Other studies looking at the adoption of cliometrics or plate tectonics find similar results: either the effect is small or there is no effect at all (Levin 1995). There is no effect either if one considers a new development that ultimately turned out not to be correct (polywater): young scientists were no more likely to buy into it (Diamond 1988).

Chapter 26 of the Handbook of Genius, "Openness to scientific innovation", has a larger sample size, spanning 28 scientific controversies from 1543 to the 1960s and covering almost four thousand scientists. And here there does seem to be an effect from age, as well as from a metric of social attitudes (being liberal). What did not correlate with being an early adopter, except in some cases, was eminence; but when controlling for age, eminence (rated based on awards and prizes won) was negatively associated with being less likely to adopt Darwinism.

A scientist who was younger than the mean age of the sample was 1.6 times more likely to support a new scientific innovation than were scientists who were above the mean;[..] Comparing supporters with opponents, the mean age difference was 14.6 years. Among the most committed supporters and opponents, however, the age disparity was almost twice as large.

This however is the average of a very heterogeneous sample:

Statistical heterogeneity occurs when the effect sizes for a predictor such as age differ substantially from one historical event to another. Evidence for heterogeneity generally points the way to important moderator effects. As an example, the correlation between age and acceptance of new theories in science varies from −.50 during the reception of Freud’s psychoanalytic ideas (n = 218, p < .001), with younger people showing greater support for these theories, to +.09 during 19th-century vitalistic efforts to refute the doctrine of spontaneous generation – a conservative stance that generally appealed to older scientists (n = 43, p = .55).

This lends some support to Planck's principle; but it leaves questions unanswered. Perhaps it used to be the case that age correlated with less openness to new ideas but it doesn't anymore; and in any case it's not a universal feature of every controversy. The paper doesn't look at whether the effect has changed over time.

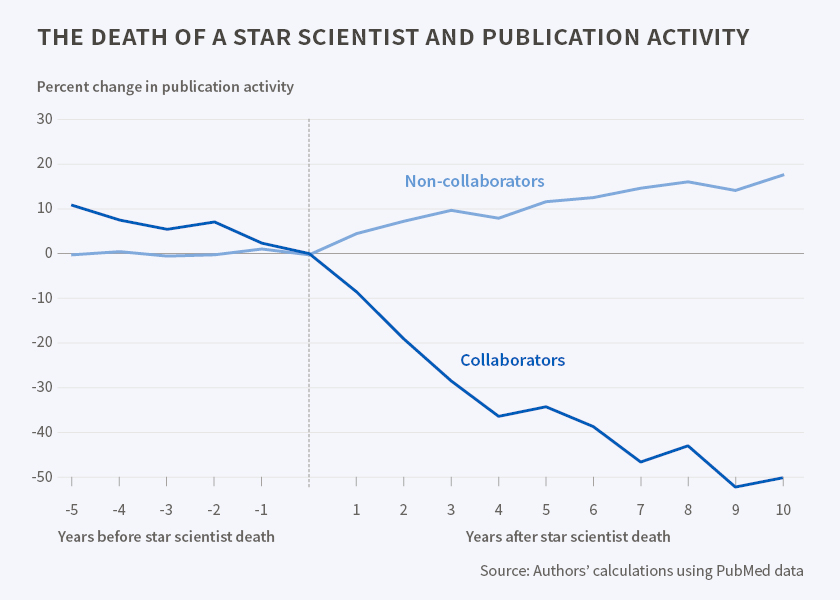

The latest attempt at assessing this question is Azoulay et al. (2019), who ask a related but different question: not whether being old makes scientists less likely to convert to new theories, but whether a field changes when a "star scientist" dies. They find that it does, and they interpret this as evidence that the eminence of such scientists prevents outsiders (from other fields), who may have different perspectives, from contributing to that field. What they test exactly is (in the life sciences, for scientists born in 1899 and later):

how the premature death of 452 eminent scientists alter the vitality (measured by publication rates and funding flows) of subfields in which they actively published in the years immediately preceding their passing, compared to matched control subfields. [...]

To our surprise, it is not competitors from within a subfield that assume the mantle of leadership, but rather entrants from other fields that step in to fill the void created by a star’s absence. Importantly, this surge in contributions from outsiders draws upon a different scientific corpus and is disproportionately likely to be highly cited. Thus, consistent with the contention by Planck, the loss of a luminary provides an opportunity for fields to evolve in novel directions that advance the scientific frontier.

One could take this to be a good exemplar of Planck's principle. After all, Planck didn't say anything about youth, just that some recalcitrant individuals exist who are not convinced, and that they get replaced by newcomers. Those newcomers need not be young and the incumbents need not be old. In the paper itself they also try to see if the effect is driven by age (p. 25), but this does not seem to be the case.

The paper itself acknowledges that these contributions do not so much overthrow existing paradigms as extend or complement them:

It is important to note, however, that the findings above do not imply that the published results of entrants necessarily contradict or overturn the prevailing scientific understanding and assumptions within a subfield. We provide indirect evidence regarding these contributions’ disruptive impact by leveraging a measure recently proposed by Funk and Owen-Smith (2017). Their index captures the degree to which an idea consolidates or destabilizes the status quo, by measuring whether the future ideas that build on the focal idea also rely on its acknowledged predecessors. The results in Table E4 of Appendix E suggest that these contributions do not radically disrupt the subfield. Rather, they appear to reflect the impact of a myriad “small r,” permanent revolutions whereby new ideas come to the fore without necessarily eclipsing prior approaches. [...]

Taken together, these results suggest that outsiders are reluctant to challenge hegemonic leadership within a field when the star is alive. They also highlight a number of factors that may constrain entry even after she is gone. Intellectual, social, and resource barriers all seem to play a role in impeding entry, with outsiders only entering subfields whose topology offers a less hostile landscape for the support and acceptance of “foreign” ideas.

The magnitude of the effect estimated by Azoulay is nontrivial: fields where a "superstar" dies see 8.55% more publications from scientists that are not collaborators of the superstar (Table 3) than would be expected, while contributions from collaborators go down 20%. Funding goes up and down by similar magnitudes respectively (all of this is per year). The number of high impact (>99th percentile) publications by non-collaborators goes up by 37% (Table 4), which is even more astonishing, even triggering my "effect is too large" heuristic. Looking at the field in absolute terms and on a longer term view, the difference is staggering.

Another interesting finding is in Figure E1 (p. 81), which shows that fields on average are born and decay following a roughly normal curve, and the effect of this trend completely overshadows anything studied in the paper. Figure E4 shows that, for these superstar scientists, productivity increases up until the age of ~45 and then slowly declines. This decline is more marked for the top 1% of publications; that is, on average scientists will increase their odds of publishing a highly cited paper somewhat until ~45, and then this rate of production of good work begins a steady decline. This fits with the idea that in general age doesn't matter for producing highly cited papers, but it does for more demanding standards than that.

Conclusion

Yes, scientists getting older is bad news. At the micro level, cognitive decline is real, and at the macro level it does seem that Nobel Prize-worthy achievements are disproportionately made by younger scientists. However, this is not as bad as it may initially seem. It's not that only young scientists can do it, or that almost all of those creative scientists are young. A naïve historically informed view of the subject is biased: yes, historically a lot of that key research was done by very young (under 30) scientists, but times have changed. Young researchers have higher fluid intelligence as their comparative advantage. When there isn't that much to learn (completely new fields open up, or we don't know that much about the world, as was the case two centuries ago), that negates the comparative advantage of older scientists: that they know more and can use experience-based heuristics to guide their research (e.g. the heuristics about what's promising in a Newtonian paradigm may not have worked when applied, back then, to the nascent modern physics paradigm).

As we have accumulated more knowledge, the background knowledge required to make a contribution has increased, and so have the age at which new scientists start practicing and the age at which they do their high impact research. The ideal researcher would be young and have a deep knowledge of their field, but gaining that knowledge takes time. This profile is very rare. Perhaps this is why the random impact hypothesis is true while at the same time Nobel Prize winners are somewhat younger: knowledge and intelligence are inversely correlated within individuals, and the outliers that escape this tradeoff are the ones who get Nobel Prizes.

Research on Planck's principle shows that there is some truth to it, especially in light of the research contained in Chapter 26 of the Handbook of Genius (with the caveat that they didn't look into historical trends). What is going on right now with older or eminent scientists (the dynamics that the Azoulay paper is capturing) is not so much resistance to adopting a new "paradigm" as a failure to take new ideas into consideration or to use new methods. So suppose that a key tenet of a field gets upended; I wouldn't expect older scientists to be particularly late in accepting the new view. Rather, where they may be slow is in noticing that they could achieve more if they just tried a new method they have never used before, or if they imported some knowledge from elsewhere.

To sum up,

- The aging of science is driven by a combination of factors including the aging of society in general, the aging of the baby boom cohort in particular, the end of mandatory retirement, and the increase in required knowledge to make a contribution to science. From a problem-solving point of view, this latter is the one that can be more directly addressed.

- Older scientists may not have as much energy as they did when they were younger, but in practice the actual experiments will be done by other lab members, so this one form of decline may not matter in practice as much as it seems.

- Younger scientists are more likely to explore new ideas. This does not mean that such research is more promising or likely to be more impactful. Taking an idea to its ultimate consequences for 20 years may be more valuable than trying the new thing coming up.

- Realistically science happens in a social setting, not alone. Teams of older and younger scientists are the norm in science. It may be the case that if everyone is markedly old (or young) we'd see issues with the productivity of a lab (on average), but we are far from this scenario.

- Productivity (count of papers published per year) increases up until ~40-50 and then declines with age.

- In general, the likelihood of producing a highly cited paper stays constant through life (The random impact rule). There is a small chance that in some historical periods (The transition to modern physics) this is not true, and younger researchers are more likely to do this kind of highly valuable research instead.

- The transition into modern physics represents a unique event that opened up opportunities for the younger scientists of that era. But from the fact that they did make a lot of key discoveries we should not infer that only they could have done so, or that only young scientists can make those discoveries in general.

- The "ideal age" at which a scientist has the best chance to achieve Nobel-worthiness is field dependent; great mathematicians and physicists are younger than great biologists and chemists.

- Historically older scientists were probably less likely to buy into a new paradigm in general, but this varies a lot by field, and it's unclear if it continues to hold. Inasmuch as all eminent scientists are older, eminence makes scientists less likely to adopt new methods or ideas from other fields. A study of recent "paradigm shifts" would be needed to see if this continues being true.

How may one leverage this knowledge to make science great again? Well, despite all the heterogeneity and individual differences involved in the nine points above, it seems clear that if I am handed a pile of papers from two researchers and told their ages, the research itself is going to be more informative than their ages about who is doing interesting work. It's very easy to point to older scientists that are doing the best work of their field.

So if you wanted to give money to scientists, to go back once again to Scientific Freedom, how old were the scientists Braben picked as promising to do something new and interesting? Table 13 of the book (p. 116) lists 25 Venture Researchers and when they did their BP-sponsored research; here's how old they were when they were selected. In some cases I'm making assumptions when I can't find their age (i.e. I assume they started their undergraduate degree at 18; if I can't find that, I find their first paper, which I assume was published the same year they completed their PhD, and I assume a PhD takes 4 years). These assumptions introduce an error of perhaps +/- 4 years, so nothing terrible for a quick view.

| Scientist(s) | Age at funding |
|---|---|
| Mike Bennett, Pat Heslop-Harrison | 42, 27 |
| Paul Broda | 44 |
| Terry Clark | 40 |
| Stan Clough, Tony Horsewill | 59, 38 |
| David Cooper, Joe Gerratt | 37, 52 |
| Adam Curtis, Chris Wilkinson | 51, 45 |
| Steve Davies | 34 |
| Edsger Dijkstra, Netty van Gasteren, Lincoln Wallen | 51, 33, 27 |
| Peter Edwards, David Logan | 38, 31 |
| Nigel Franks, Jean Louis Deneubourg, Simon Goss | 34, 37, 26 |
| Dudley Herschbach | 56 |
| Herbert Huppert, Steve Sparks | 40, 40 |
| Andrew Keller, Ted Atkins, Peter Barham | 59, 44, 34 |
| Jeff Kimble | 34 |
| Graham Parkhouse | 42 |
| Alan Paton, Eunice Allan, Anne Glover | 60, 25, 26 |
| John Pendry | 39 |
| John C Polanyi | 54 |
| Martyn Poliakoff | 41 |
| Alan Rayner, John Beeching | 37, 30 |
| Ian Ross | 58 |
| Ken Seddon | 38 |
| Colin Self | 38 |
| Gene Stanley, José Teixeira | 49, 42 |
| Harry Swinney, Werner Horsthemke, Patrick DeKepper, Jean-Claude Roux, Jacques Boissonade | 46, 33, 40, ?, 35 |
| Robin Tucker, David Hartley, Desmond Johnston | 48, 25, 30 |

What we see from the list is that most of the young scientists in it were students of the older scientists that got the award. The youngest "independent" scientist funded was 31, and the oldest scientist funded was 60 (Alan Paton). Overall this does not seem to be a sample that's heavily biased towards young scientists. Even back in 1980, going by NIH standards, the average age at which scientists got an R01 was 35.7. Counting only the PIs in Braben's list, they are, if anything, older.
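For a rough check of that comparison, here is a minimal Python sketch that summarizes the ages in the table. The ages are copied from the table as given, and the one unknown age is skipped. Treating the oldest member of each group as its PI is an assumption made here for illustration only; Braben's table does not label PIs, so read the "senior" figures as a proxy rather than a precise count.

```python
from statistics import mean, median

# Ages at funding, one inner list per table row, in table order.
# None marks the single age that could not be found ("?").
GROUPS = [
    [42, 27], [44], [40], [59, 38], [37, 52], [51, 45], [34],
    [51, 33, 27], [38, 31], [34, 37, 26], [56], [40, 40],
    [59, 44, 34], [34], [42], [60, 25, 26], [39], [54], [41],
    [37, 30], [58], [38], [38], [49, 42], [46, 33, 40, None, 35],
    [48, 25, 30],
]

# Average age at R01 in 1980, as quoted in the text.
NIH_R01_MEAN_AGE_1980 = 35.7


def known(ages):
    """Drop unknown (None) ages from a group."""
    return [a for a in ages if a is not None]


def summarize(ages):
    """Return basic descriptive statistics for a list of ages."""
    return {
        "n": len(ages),
        "min": min(ages),
        "max": max(ages),
        "mean": round(mean(ages), 1),
        "median": median(ages),
    }


# Everyone with a known age.
all_ages = [a for group in GROUPS for a in known(group)]

# ASSUMPTION (hypothetical proxy): the oldest member of each group is its PI.
senior_ages = [max(known(group)) for group in GROUPS]

print("all funded scientists:", summarize(all_ages))
print("senior member per group:", summarize(senior_ages))
print("senior mean above 1980 R01 mean:",
      mean(senior_ages) > NIH_R01_MEAN_AGE_1980)
```

Changing the proxy (for example, using the first-listed name instead of the oldest) only requires replacing the `senior_ages` line, which makes it easy to see how sensitive the comparison is to that choice.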

So looking at the picture from before,

back in 1980 you had the entire distribution peaking before the 40s. Now researchers under 40, or even 50, are not the majority. This may be unfair to new researchers coming into the field (given a limited amount of funding), but from an efficiency point of view it is unclear that the aging of the scientific workforce by itself leads to a reduced number of "paradigm shifts", "breakthroughs", "Nobel-worthy research" or anything in that class of outputs.

Citation

In academic work, please cite this essay as:

Ricón, José Luis, “Was Planck right? The effects of aging on the productivity of scientists”, Nintil (2020-12-16), available at https://nintil.com/age-and-science/.
